Setting AI Visibility KPIs and OKRs

Which AI visibility goals actually hold up as team OKRs, and which ones let you claim a win without moving any real outcome?

The fastest way to ruin an AI visibility program is to set mention rate as an OKR. Mention rate depends on which queries you run, so it goes up when you widen the query set to broader topics, whether or not buyers see you any more often. It rewards activity that nobody cares about. Good AI visibility OKRs use leading indicators (mention rate, citation rate) as KPIs you monitor, and outcome metrics (share of voice on priority queries, pipeline influenced) as the actual goals. Get that distinction right and your quarterly reviews stop arguing about methodology and start arguing about strategy.

KPIs vs OKRs: Why the Distinction Matters

KPIs and OKRs are not interchangeable. One is a gauge. The other is a destination. Conflating them is why AI visibility programs often show "success" on the dashboard but no impact on the business.

- KPIs are ongoing health metrics. You watch them. You don't set targets for them except as tripwires.

- OKRs are quarterly or annual goals. They should be specific, measurable, and tied to an outcome the business cares about.

If your AI visibility program treats mention rate as an OKR, anyone on the team can juice the number by querying broader topics. Treat it as a KPI and the team focuses on moving the real outcome metric underneath it.

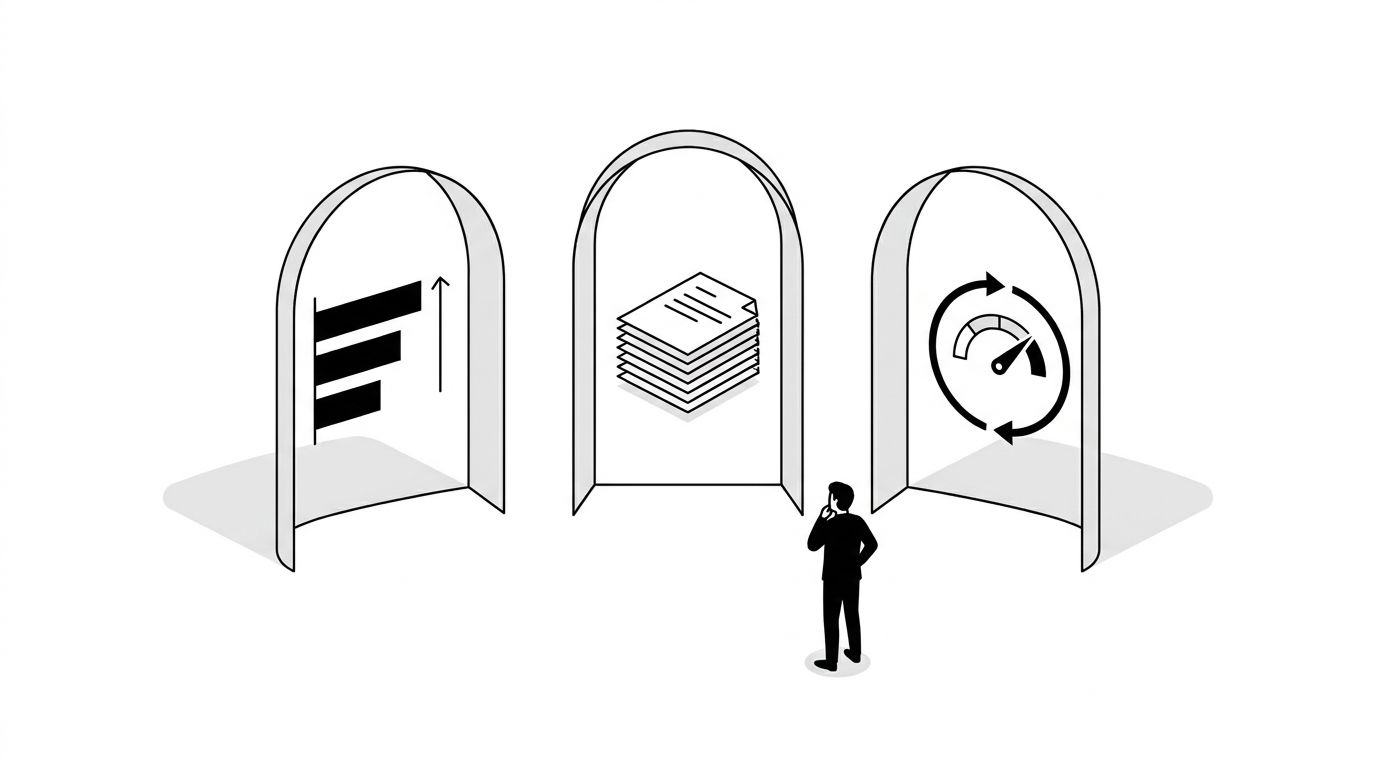

The KPI Layer: What to Monitor Continuously

Monitor these. Don't set explicit targets beyond baseline health.

- Mention rate. Total mentions of your brand per 100 AI queries in your category.

- Citation rate. Percent of AI responses where your domain is cited in the source list.

- Sentiment distribution. Percent positive, neutral, and negative of brand mentions.

- Query coverage. Percent of priority queries where you appear in at least one AI response.
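The four definitions above reduce to a small calculation. What follows is a minimal sketch, not a reference implementation. The `AIResponse` record, its field names, and `kpi_snapshot` are hypothetical. It assumes one record per query run, sentiment tallied per response that mentions the brand, and "appear" meaning at least one brand mention in the response.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIResponse:
    """One recorded AI answer to one query run (hypothetical record shape)."""
    query: str
    is_priority: bool          # query belongs to the priority set
    brand_mentions: int        # times the brand is named in the answer
    domain_cited: bool         # our domain appears in the source list
    sentiment: Optional[str]   # "positive", "neutral", "negative"; None if no mention


def _pct(part: float, whole: float) -> float:
    """Percentage helper that returns 0.0 instead of dividing by zero."""
    return 100.0 * part / whole if whole else 0.0


def kpi_snapshot(responses: list) -> dict:
    """Compute the four KPI-layer metrics from a batch of recorded responses."""
    if not responses:
        raise ValueError("need at least one recorded response")

    total = len(responses)
    mentioned = [r for r in responses if r.brand_mentions > 0]

    # Query coverage is counted per distinct priority query, not per run.
    priority = {r.query for r in responses if r.is_priority}
    covered = {r.query for r in mentioned if r.is_priority}

    return {
        # Total brand mentions per 100 query runs.
        "mention_rate_per_100": _pct(sum(r.brand_mentions for r in responses), total),
        # Share of responses whose source list includes our domain.
        "citation_rate_pct": _pct(sum(r.domain_cited for r in responses), total),
        # Assumption: sentiment is measured over responses that mention the brand.
        "sentiment_pct": {
            label: _pct(sum(r.sentiment == label for r in mentioned), len(mentioned))
            for label in ("positive", "neutral", "negative")
        },
        "query_coverage_pct": _pct(len(covered), len(priority)),
    }
```

Because every figure is a ratio over the recorded query set, changing that set changes the numbers. That is a reason to keep the query list fixed between snapshots.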

The AI visibility metrics guide covers the exact definitions and measurement approach. Watch these weekly. Alert on anomalies. Don't build quarterly targets around them. If you don't have a place to display this layer cleanly, our AI visibility dashboard template gives you the layout to start from.
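One way to alert on anomalies without turning a KPI into a target is a simple tripwire against recent history. The sketch below is an illustration under assumptions: the function name `is_anomalous`, the z-score approach, and the default threshold of 2.0 are choices made for this example, not a method the guide prescribes.

```python
from statistics import mean, stdev


def is_anomalous(history: list, latest: float, z_threshold: float = 2.0) -> bool:
    """Flag the latest weekly reading if it sits far outside recent history.

    history: prior weekly values for one KPI (for example, mention rate).
    latest: this week's value.
    z_threshold: how many standard deviations count as an anomaly (assumed default).
    """
    if len(history) < 2:
        raise ValueError("need at least two prior readings to estimate spread")

    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation

    if sigma == 0:
        # Perfectly flat history: any change at all is worth a look.
        return latest != mu

    return abs(latest - mu) / sigma > z_threshold
```

A tripwire like this fires on sudden drops and sudden spikes alike. A spike is useful to catch because it may mean the query set changed rather than the brand's visibility.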

The OKR Layer: What to Actually Commit To

Your OKRs need to be outcome-oriented. Three consistently hold up across programs I've helped set up:

- Share of voice on priority queries. Define 10 to 30 category-level queries your target buyers would actually ask. Target a specific share of voice across that set (for example: move from 22% to 35% by end of quarter). This is the metric that maps most closely to market share.

- AI-influenced pipeline. Track referral traffic from AI platforms plus branded search lifts that correlate with AI mentions. Target a dollar figure tied to pipeline or revenue.

- Negative mention resolution. Count brand misrepresentations and compliance-relevant statements from AI. Target a percent resolved within a defined window (for example: 80% of material misrepresentations corrected within 30 days of detection).
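Two of these OKRs are simple ratios, and writing them down removes most arguments about methodology. The sketch below rests on assumptions. Share of voice is taken as your brand's mentions divided by all tracked brands' mentions across the priority query set, which is one common definition and may differ from yours. The function names and input shapes are hypothetical.

```python
from datetime import date
from typing import Optional


def share_of_voice(mentions_by_brand: dict, brand: str) -> float:
    """Percent of all tracked brand mentions (on priority queries) that are ours.

    Assumed definition: our mentions / mentions of every tracked brand.
    """
    total = sum(mentions_by_brand.values())
    if total == 0:
        return 0.0
    return 100.0 * mentions_by_brand.get(brand, 0) / total


def resolution_rate(issues: list, window_days: int = 30) -> float:
    """Percent of detected misrepresentations corrected within the window.

    issues: (detected_on, resolved_on) date pairs; resolved_on is None while open.
    Open issues count as unresolved, even if they are younger than the window.
    """
    if not issues:
        return 0.0
    resolved_in_time = sum(
        1
        for detected_on, resolved_on in issues
        if resolved_on is not None
        and (resolved_on - detected_on).days <= window_days
    )
    return 100.0 * resolved_in_time / len(issues)
```

For example, `share_of_voice({"us": 22, "rival_a": 48, "rival_b": 30}, "us")` gives the 22% starting point used in the example target above. The brand names and counts are made up for illustration.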

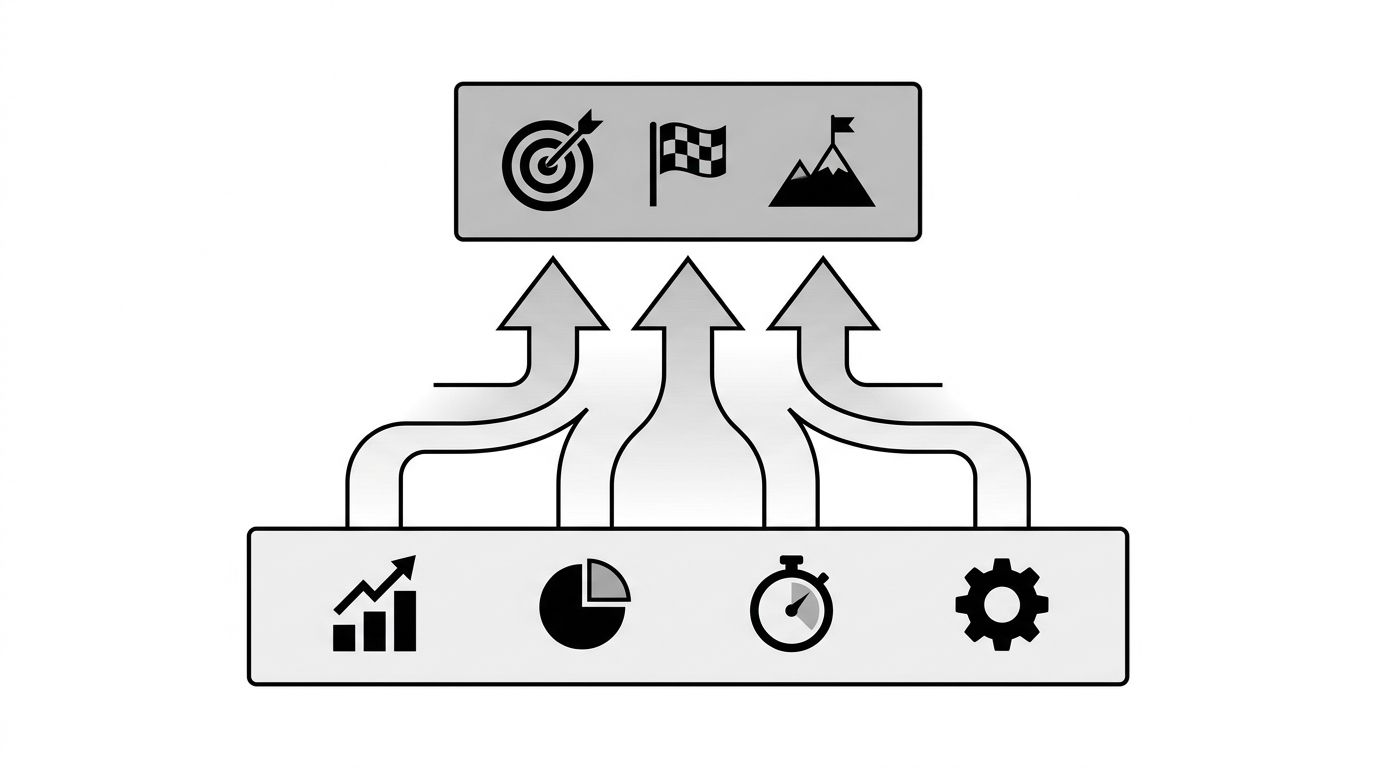

The image below shows how the KPI layer feeds into the OKR layer.

Setting Good Targets

Targets for AI visibility OKRs should be derived from a baseline plus a realistic improvement rate. Don't set arbitrary stretch goals. Look at what brands similar to yours have achieved over three to six months of concentrated work.

- Share of voice: expect a 5 to 15 percentage point improvement per quarter on priority queries with active optimization. Larger jumps usually mean competitors collapsed, not that you did something uniquely right.

- AI-influenced pipeline: expect 20 to 60% year-over-year growth for brands that weren't actively measuring before. The big gains come from measurement itself, not optimization.

- Negative mention resolution: 70% of material misrepresentations resolved within 30 days is strong. Higher rates, such as the 80% in the example target above, are possible but require coordinated content and PR work.
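A quick check of a proposed share-of-voice target against the range above keeps arbitrary stretch goals out of the plan. This is a minimal sketch. The function name and labels are hypothetical, and the 5 and 15 point bounds are the rule of thumb from this section, not universal constants.

```python
def classify_sov_target(
    baseline_pct: float,
    target_pct: float,
    low_gain: float = 5.0,    # lower bound of expected quarterly gain, in points
    high_gain: float = 15.0,  # upper bound of expected quarterly gain, in points
) -> str:
    """Label a quarterly share-of-voice target relative to the expected gain range."""
    gain = target_pct - baseline_pct
    if gain < low_gain:
        return "conservative"
    if gain > high_gain:
        return "stretch"
    return "realistic"
```

The earlier example of moving from 22% to 35% is a 13 point gain, so it falls inside the range. A target of 45% from the same baseline would be flagged as a stretch.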

Our guide to calculating GEO ROI covers the math for setting these targets based on your specific category and budget.

How to Run the Quarterly Review

Three questions at each review. Skip everything else.

- Did we move share of voice on priority queries? If no, why, and what changes for next quarter?

- Did AI-influenced pipeline grow? If no, is the issue attribution, volume, or conversion?

- Which negative mentions did we resolve, and which are still open?

Anything else belongs in a separate operational review. The OKR review should be 30 minutes on outcomes.

Our executive reporting guide covers how to package this for C-suite audiences. For enterprise rollouts, the enterprise solution page outlines how to align this work across multiple business units and regions.