Monitoring Brand Misinformation Across ChatGPT, Gemini, and Perplexity

Most teams monitor AI brand misinformation by hand-checking three to five prompts a week, which misses the long tail where misinformation usually lives. The fix is a monthly stratified sample of your full prompt set, split across three buyer-intent layers: awareness, consideration, and comparison. Misinformation at the comparison layer is the most expensive of the three. That is the layer where buyers are eliminating options, and a wrong fact about pricing or feature parity removes you from the shortlist before a sales conversation ever happens. If you only have time to monitor one layer, monitor that one.

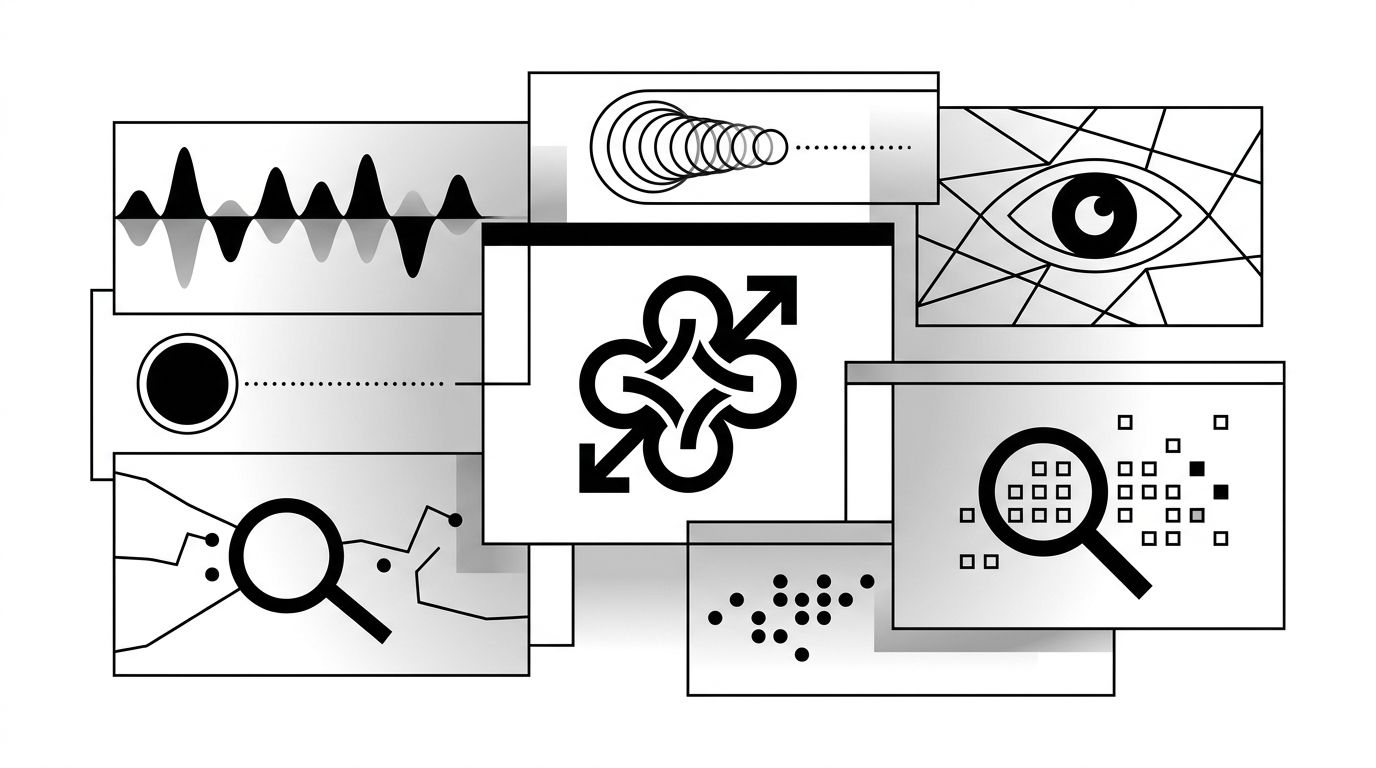

What monitoring actually means at AI-platform scale

Monitoring is not a weekly check on the homepage prompt. The prompt set that reflects how buyers actually research your category is closer to 80 to 200 unique queries, distributed across awareness, consideration, and comparison intent. Each query needs to run on at least four platforms (ChatGPT, Gemini, Perplexity, and Google AI Overviews), so a single full pass produces 320 to 800 responses, and rerunning the set weekly puts the real monitoring surface at four-figure responses a month.
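The arithmetic behind that surface estimate is easy to sketch. The snippet below is illustrative only: the function names are hypothetical, and the default of four passes a month is an assumption (roughly one rerun per week), not a figure from any platform.

```python
# Back-of-envelope size of the monitoring surface.
# PLATFORMS mirrors the four platforms named above; everything else is illustrative.
PLATFORMS = ["ChatGPT", "Gemini", "Perplexity", "Google AI Overviews"]


def responses_per_pass(num_queries: int, num_platforms: int = len(PLATFORMS)) -> int:
    """Responses produced by running every query once on every platform."""
    return num_queries * num_platforms


def responses_per_month(num_queries: int, passes_per_month: int = 4) -> int:
    """Responses per month if the full set is rerun `passes_per_month` times.

    passes_per_month=4 is an assumed weekly rerun, not a recommendation.
    """
    return responses_per_pass(num_queries) * passes_per_month


if __name__ == "__main__":
    for n in (80, 200):
        print(n, responses_per_pass(n), responses_per_month(n))
```

Even at the low end of the prompt set, a weekly rerun is far more than anyone can read by hand, which is the case for sampling.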

Hand-checking a handful of those is theatre. You will catch obvious hallucinations and miss the costly ones: the misattributed feature in a comparison answer, or the outdated pricing in a "best tools for X" list.

The work is to build a sampling system that produces a representative read on the surface, not a complete one. Sampling is how every other large-scale quality function (manufacturing QA, fraud detection, polling) handles the same problem. Apply the same logic here.

The three layers where misinformation hurts

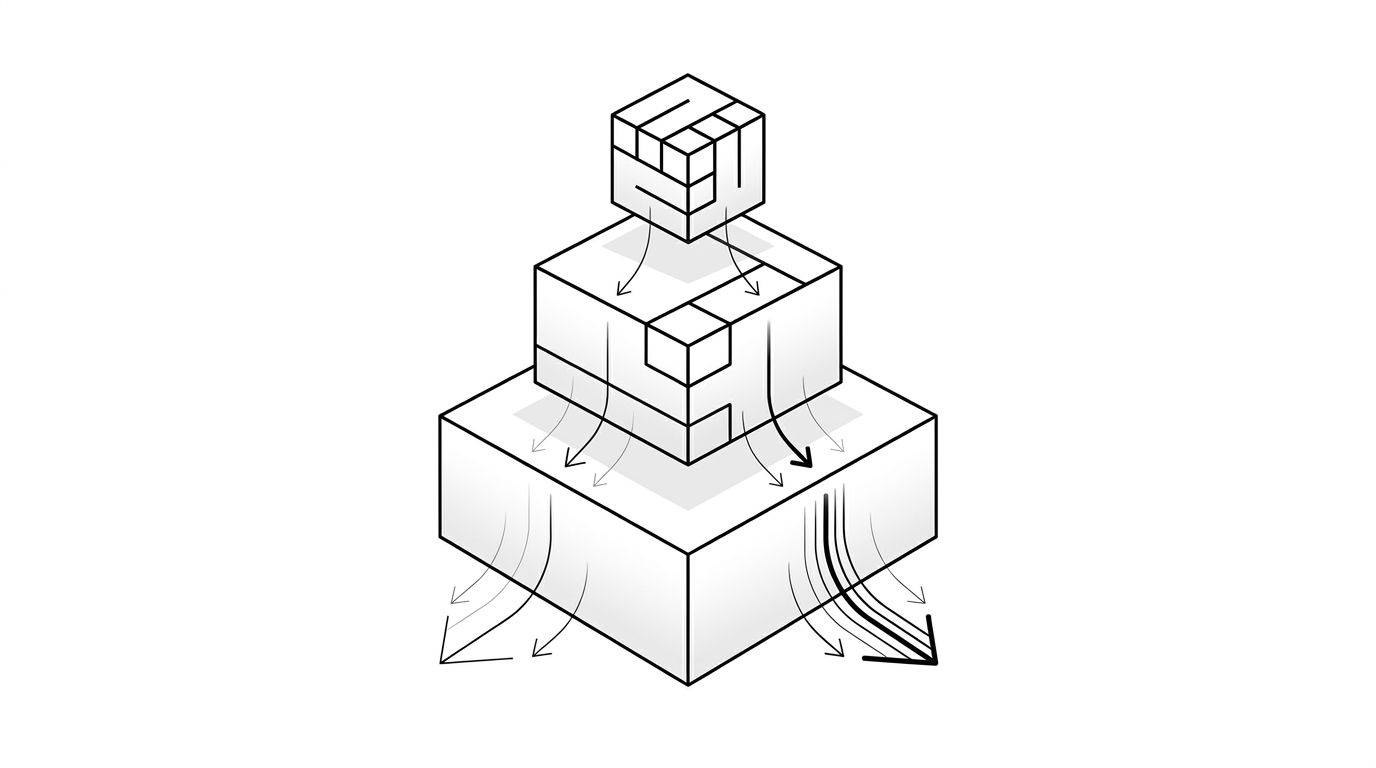

The diagram above shows the three intent layers and how the cost of bad information rises as the query moves closer to purchase.

Awareness. Queries like "what is generative engine optimization" or "how do AI search engines work." Misinformation here is annoying but rarely fatal. The buyer is forming a mental model, not picking a vendor. A wrong fact about your category is a long-term brand problem, not a deal-cycle problem.

Consideration. Queries that name a problem and ask for approaches: "how do I track brand mentions in ChatGPT," "tools for AI visibility audits." Misinformation here pushes buyers toward the wrong solution shape. If an AI says your product does not handle something it does, the buyer never adds you to their evaluation list.

Comparison. Queries that name vendors directly: "Geology vs [competitor]," "best AI visibility platforms for enterprise." Misinformation here is the most expensive class. A wrong price point, a missing integration, or a stale customer logo: any one of these can cost you a slot on the shortlist. The buyer makes the cut decision based on the AI answer and never tells you they did.

The implication for monitoring is straightforward. Comparison-layer queries deserve weighted attention even when they are a small share of your total query volume. They are the queries where errors map directly to lost pipeline.

Setting up a monitoring cadence: what to check, what to ignore

A working cadence has two tracks running in parallel.

The monthly stratified sample is the load-bearing piece. Pull a sample of 60 to 100 queries from your full prompt set, weighted toward comparison and consideration. Run them across all four platforms. Log every factual claim about your brand and competitors. Flag anything wrong, outdated, or misattributed. This catches the long tail.
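One way to draw that monthly sample is sketched below. The layer weights are hypothetical placeholders: "weighted toward comparison and consideration" is the only guidance here, so tune the split to your own pipeline. The function name and the `(query, layer)` tuple shape are also assumptions made for illustration.

```python
import random
from collections import defaultdict

# Hypothetical split; the only requirement is that comparison and
# consideration get more than their share of raw query volume.
LAYER_WEIGHTS = {"awareness": 0.2, "consideration": 0.3, "comparison": 0.5}


def stratified_sample(prompts, sample_size, weights=LAYER_WEIGHTS, seed=None):
    """Draw a layer-weighted random sample from a prompt set.

    prompts: list of (query, layer) tuples.
    Returns a list of (query, layer) tuples. A layer contributes fewer
    queries than its quota if its pool is too small.
    """
    rng = random.Random(seed)  # seed makes a month's draw reproducible

    # Group queries by intent layer.
    by_layer = defaultdict(list)
    for query, layer in prompts:
        by_layer[layer].append(query)

    sample = []
    for layer, weight in weights.items():
        pool = by_layer.get(layer, [])
        quota = min(len(pool), round(sample_size * weight))
        # Sample without replacement inside each layer.
        sample.extend((q, layer) for q in rng.sample(pool, quota))
    return sample
```

Calling `stratified_sample(prompts, 80, seed=202501)` on a tagged prompt set returns up to 80 queries, half of them comparison-layer under the weights above. Fixing the seed per month keeps the draw auditable when someone asks why a query was or was not checked.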

The weekly trigger check is the smaller piece. Pick 10 to 15 high-stakes queries (the comparison ones, plus any branded searches that drive measurable traffic). Run them weekly. The point is not coverage. The point is to catch a sudden regression, the kind that follows a model update or a competitor shipping new content that displaces your positioning.
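If the weekly results are logged in a consistent shape, the regression check reduces to a set difference between this week and last week. The sketch below assumes a hypothetical log format, a dict keyed by `(query, platform)` whose values are the sets of claims a reviewer flagged as wrong; adapt it to whatever your logging actually produces.

```python
def find_regressions(previous, current):
    """Return wrong claims that are flagged this week but were not last week.

    previous, current: dict mapping (query, platform) -> set of flagged claims.
    Returns a dict with the same keys, containing only newly flagged claims.
    """
    regressions = {}
    for key, flagged_now in current.items():
        # Claims already flagged last week are known issues, not regressions.
        new_flags = flagged_now - previous.get(key, set())
        if new_flags:
            regressions[key] = new_flags
    return regressions


if __name__ == "__main__":
    last_week = {("brand vs competitor", "ChatGPT"): {"price is outdated"}}
    this_week = {
        ("brand vs competitor", "ChatGPT"): {"price is outdated", "integration missing"},
        ("brand vs competitor", "Gemini"): set(),
    }
    print(find_regressions(last_week, this_week))
```

Only the newly flagged claim surfaces, so a known, already-routed issue does not raise the alarm again every week.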

Things to ignore in your monitoring:

- One-off prompt variations that no real buyer would type

- Personality questions ("is [brand] a good company?") unless reputation is your specific concern

- Platform-specific quirks that disappear after one model refresh

Citation tracking sits next to this work. If you want a more granular read on which sources AI platforms are pulling when they answer your queries, see our piece on tracking AI citations. The two streams reinforce each other: misinformation monitoring tells you when something is wrong, citation tracking tells you which source is feeding the wrong answer.

When monitoring becomes correction

Monitoring is a detection function. The moment a misinformation case clears your noise threshold (same wrong claim, two platforms, two weeks), it shifts to a correction workflow. That is a different motion with a different playbook, and our hub guide on responding to AI misinformation covers the four response patterns in detail.
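The noise threshold can be written down as a small rule so that escalation does not depend on who is reading the log that week. This is a sketch of one reading of the rule: a claim escalates once it has been seen on at least two distinct platforms and in at least two distinct weeks. The function name, the tuple shape, and the week labels are hypothetical.

```python
from collections import defaultdict


def claims_over_threshold(observations, min_platforms=2, min_weeks=2):
    """Return the wrong claims that clear the noise threshold.

    observations: iterable of (claim, platform, week) tuples, one per
    sighting of a claim flagged as wrong. `week` is any stable label,
    for example an ISO week string such as "2025-W03".
    """
    platforms = defaultdict(set)
    weeks = defaultdict(set)
    for claim, platform, week in observations:
        platforms[claim].add(platform)
        weeks[claim].add(week)

    # A claim escalates only if it is both cross-platform and persistent.
    return sorted(
        claim
        for claim in platforms
        if len(platforms[claim]) >= min_platforms and len(weeks[claim]) >= min_weeks
    )
```

If you read "two weeks" as two consecutive weeks, tighten the week check accordingly; the version above counts any two distinct weeks.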

For enterprise brands with reputation risk at scale, the monitoring-to-correction pipeline becomes a named function with a designated owner, an escalation path, and a quarterly review of what slipped through. The cost of skipping that structure is opaque. You do not see the lost deals because the buyer never gets on a call. Our enterprise solution is built around closing that gap, monitoring at the cadence above and routing surfaced issues into the correction workflow without the four-week delay most ad-hoc setups produce.

The smaller the team, the more important the cadence. A monthly stratified sample plus a weekly trigger check fits inside a few hours of work if the prompt set is stable and the logging is automated. That is the floor. Below it, you are guessing.