Misinformation in AI Responses: What Brands Can Do About It

When an AI model says something wrong about your brand, the right response is rarely a takedown request. It is a source-layer correction. Models do not have a central database you can edit; they reassemble each answer from training data and whatever they retrieve from the web. The only durable fix is to flood the specific claim area with accurate, dated, citable content on your own domain and on authoritative third-party sites. A legal letter changes nothing in the next response cycle. A content strategy that targets the exact wrong claim does. This shifts misinformation response from a PR problem into a content engineering problem, which most brands are not set up for.

Why Takedown Requests Usually Fail

OpenAI, Anthropic, and Google offer reporting channels, but these are built for policy and copyright issues, not factual errors about businesses. Even when a report is acted on, the fix applies to one model version, and the error can reappear after retraining.

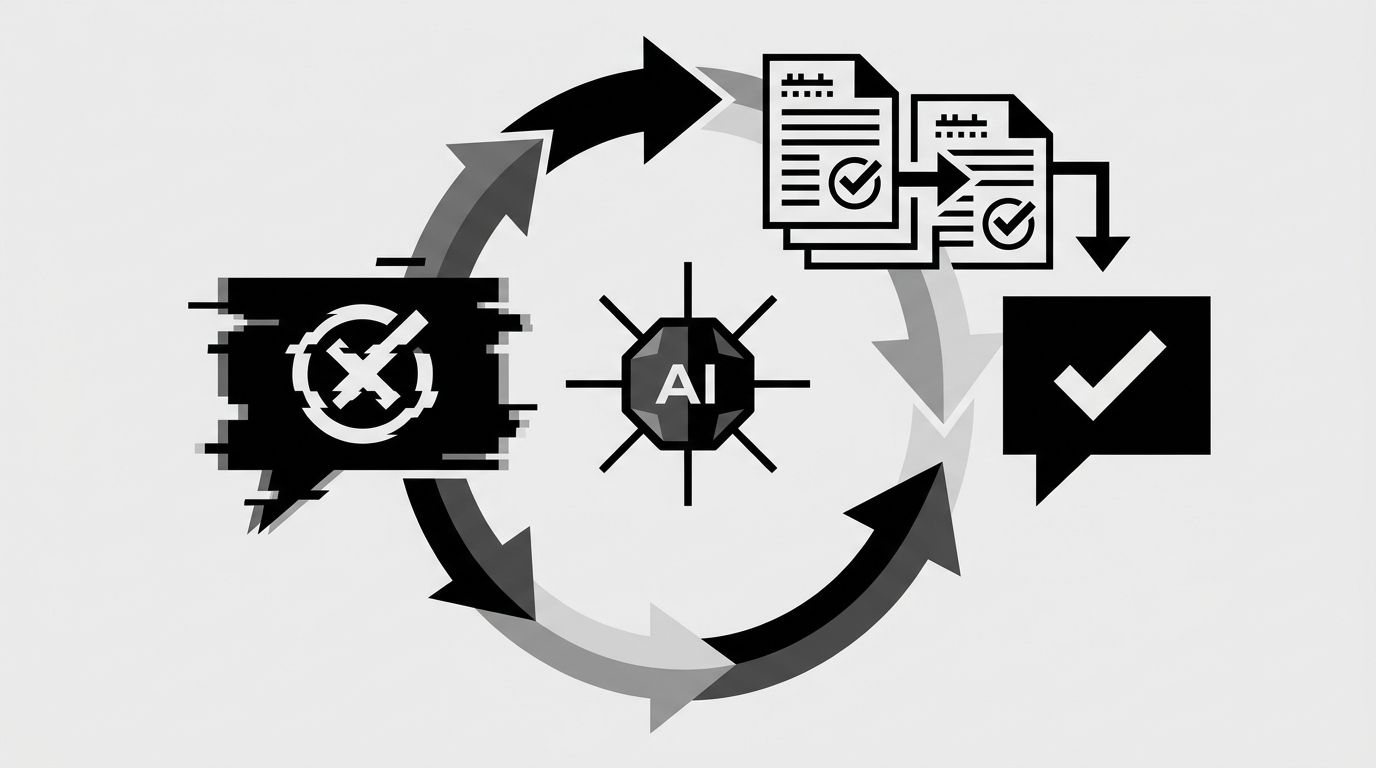

The mechanism producing the bad answer is retrieval plus generation. The model pulled a claim from a forum post, an outdated article, or a competitor comparison page, or it hallucinated a detail where training data was thin. Unless you address the source layer, the answer keeps resurfacing. Our piece on what happens when AI gets your brand wrong covers the mechanics.

The Four Patterns of AI Misinformation

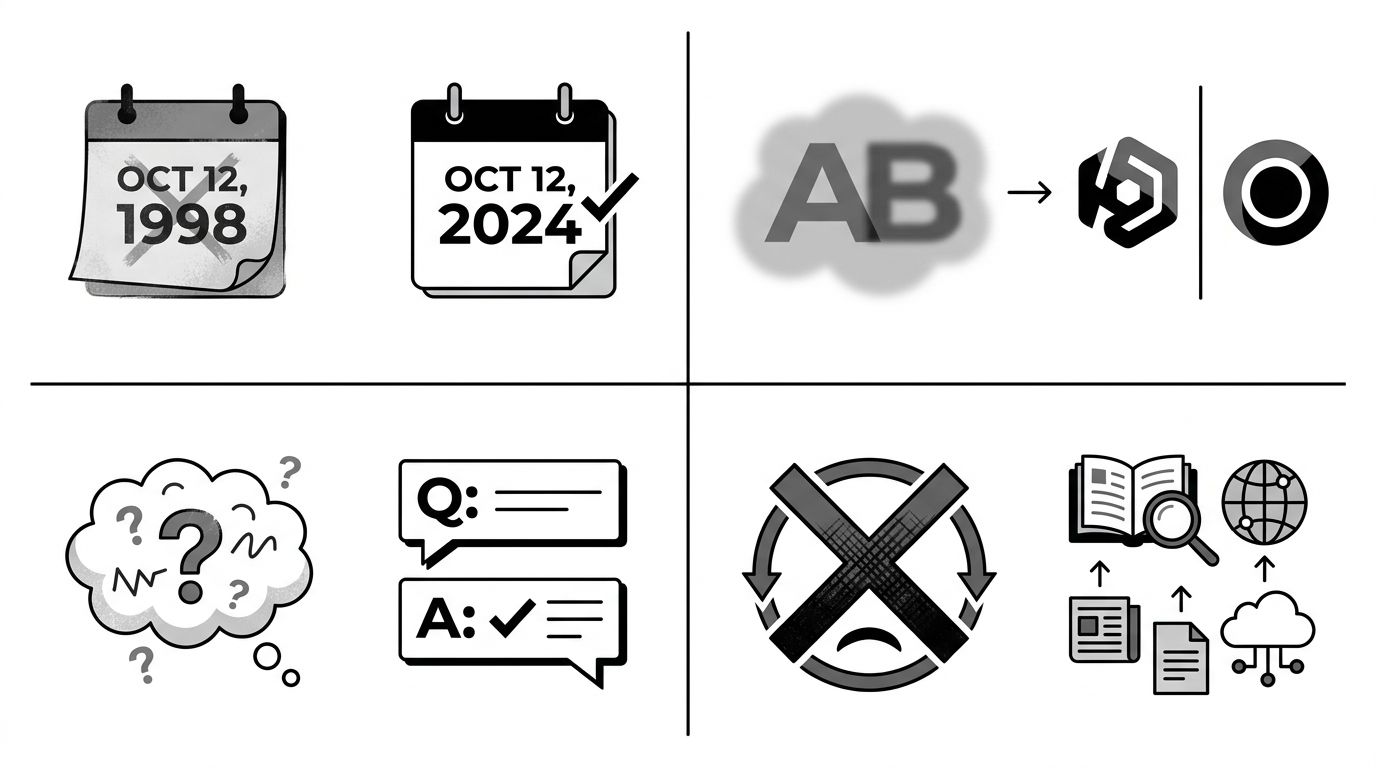

Most brand-related errors fall into one of four categories. The response differs for each.

- Outdated facts. Pricing, features, leadership, or locations that changed. The fix is publishing current, dated facts on crawlable URLs and retiring or redirecting old pages.

- Conflated brands. The model confuses you with a similarly named company. The fix is disambiguation content: a clear "who we are and who we are not" statement with structured data.

- Hallucinated claims. The model fabricates a detail that was never true. The fix is a factual response page that addresses the claim directly in question-answer form.

- Sentiment distortion. The model overweights a handful of negative sources. The fix is broadening the source pool, not deleting the negatives.

The matrix above makes the response concrete. Each pattern has a specific content move. Treating all misinformation the same way, usually with a legal letter, works on none of them.

The Preemptive Playbook

Most enterprise brands wait for misinformation to appear and then scramble. The better approach is to build accurate signals before errors get locked in. This is defensive GEO.

- Publish a brand facts page. A single URL that states founding year, leadership, HQ, product categories, and any commonly confused brand names. Mark it up with Organization schema. This becomes a canonical source models cite.

- Create an authoritative about section. Separate pages for history, team, values, and key milestones. Together they produce a dense factual cluster that drowns out thin or wrong sources.

- Own the comparison narrative. If competitors publish misleading comparison pages, publish your own with accurate specs. Models prefer authoritative first-party sources over third-party opinion when the data is concrete.

- Refresh aggressively. Every fact page gets a visible updated date and gets reviewed quarterly. Models weight recency.
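The Organization markup for a brand facts page can be sketched in a few lines. This is a minimal illustration, not a complete schema: the company name, URL, people, and confused-with brands are all placeholder values, and it covers only a subset of the facts listed above. The property names (foundingDate, employee, address, disambiguatingDescription, sameAs) come from the schema.org vocabulary.

```python
import json


def organization_jsonld(name, url, founding_year, leaders, hq_city, hq_country,
                        confused_with, profiles):
    """Return a schema.org Organization description as a plain dict."""
    others = ", ".join(confused_with)
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "foundingDate": str(founding_year),
        # Leadership expressed as employees with job titles.
        "employee": [
            {"@type": "Person", "name": person, "jobTitle": title}
            for person, title in leaders
        ],
        "address": {
            "@type": "PostalAddress",
            "addressLocality": hq_city,
            "addressCountry": hq_country,
        },
        # Plain-language "who we are not" statement for similarly named brands.
        "disambiguatingDescription": f"{name} is not affiliated with {others}.",
        "sameAs": profiles,
    }


# All values below are hypothetical placeholders.
facts = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    founding_year=2009,
    leaders=[("Jane Placeholder", "CEO")],
    hq_city="Austin",
    hq_country="US",
    confused_with=["Example Corp", "Exemplar Inc"],
    profiles=["https://www.linkedin.com/company/example-co"],
)

# Wrap the JSON in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(facts, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Generating the markup from one function keeps the facts page and any other page that embeds the same block in sync when a fact changes.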

Monitoring Is Half the Job

You cannot correct what you do not catch. Run a standard set of 30 to 50 brand prompts monthly across ChatGPT, Perplexity, Gemini, and Copilot. Flag anything factually wrong, conflated, or sentiment-distorted. Our guide on tracking your brand across AI platforms covers the workflow. For regulated industries, this monitoring cadence is non-negotiable; our AI compliance guide explains why.
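A first-pass check on collected responses might look like the sketch below. It assumes you already have the response text for each prompt (how you collect it is left open), and the names BrandCheck and review_response are hypothetical. Substring matching is crude: it can catch missing facts and repeated known errors, but not sentiment distortion, so flagged and unflagged answers still need a human read.

```python
from dataclasses import dataclass, field


@dataclass
class BrandCheck:
    prompt: str
    # Facts a correct answer should state.
    expected_facts: list = field(default_factory=list)
    # Known wrong claims or confusable brand names seen before.
    known_errors: list = field(default_factory=list)


def review_response(platform, check, response_text):
    """Flag a response that omits expected facts or repeats known errors."""
    text = response_text.lower()
    missing = [f for f in check.expected_facts if f.lower() not in text]
    repeated = [e for e in check.known_errors if e.lower() in text]
    return {
        "platform": platform,
        "prompt": check.prompt,
        "missing_facts": missing,
        "repeated_errors": repeated,
        "needs_review": bool(missing or repeated),
    }


# Placeholder brand and responses, for illustration only.
check = BrandCheck(
    prompt="Where is Example Co headquartered?",
    expected_facts=["Austin"],
    known_errors=["Denver", "Example Corp"],
)
good = review_response("ChatGPT", check, "Example Co is headquartered in Austin, Texas.")
bad = review_response("Gemini", check, "Example Corp is based in Denver.")
print(good["needs_review"], bad["needs_review"])
```

Running the same checks every cycle gives you a comparable record over time, which makes it easier to see whether a source-layer correction has actually changed the answers.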

When To Escalate

Some misinformation does warrant direct escalation to the platform, specifically when it is:

- Defamatory or materially harmful to the business in a legally demonstrable way

- Consistently reproduced across multiple prompts despite source-layer corrections

- Attached to a specific model version that a platform can patch

Document the prompt, response, date, and model version, then use the platform's enterprise reporting channel. Pair it with the content fix, never instead of it.
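One way to keep that documentation consistent is a fixed record per incident. The sketch below is an assumption about what such a record could contain, not a format any platform requires; the field names and sample values are hypothetical. The readiness check encodes the rule that an escalation goes out only when every field is filled in, including a link to the content fix.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date


@dataclass
class EscalationRecord:
    platform: str
    model_version: str
    prompt: str
    response: str
    observed_on: str       # ISO date the response was captured
    content_fix_url: str   # the source-layer correction paired with the report


def ready_to_escalate(record):
    """True only when every field, including the content fix, is filled in."""
    return all(str(value).strip() for value in asdict(record).values())


# Placeholder values, for illustration only.
record = EscalationRecord(
    platform="ChatGPT",
    model_version="model-version-placeholder",
    prompt="Who owns Example Co?",
    response="Example Co is a subsidiary of Example Corp.",
    observed_on=date(2025, 1, 15).isoformat(),
    content_fix_url="https://www.example.com/brand-facts",
)

print(ready_to_escalate(record))
print(json.dumps(asdict(record), indent=2))
```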

For large brands facing systematic issues, our enterprise solution handles both the content layer and the platform relationships.

The Budget Question

A reasonable benchmark: if AI platforms influence more than 10% of your customers' purchase research, treat misinformation response as a line item equivalent to traditional reputation management. That is a small team running weekly prompt monitoring, a content cadence for brand facts, and a designated owner for escalations. Waiting until misinformation is entrenched costs far more than prevention.

Run a free audit to see what AI platforms currently say about your brand.