AI Visibility Dashboard Template — A Reference Layout

Which four panels does an AI visibility dashboard actually need, and which tiles are just noise that no executive opens twice?

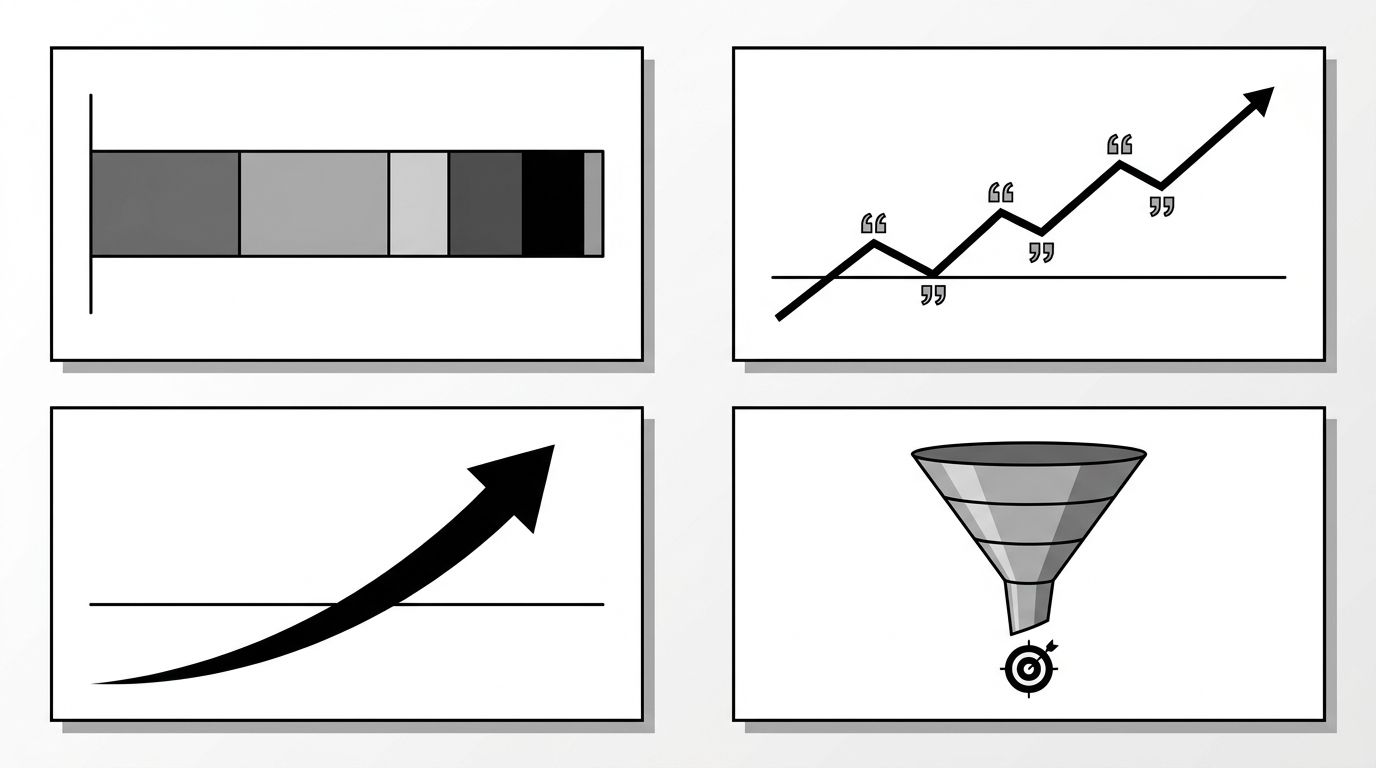

A useful AI visibility dashboard has four panels, not forty. The four are shortlist share, citation count, branded-search lift, and pipeline contribution. Everything else is noise. Most teams ship a 12-tile view with mention counts, sentiment splits, platform breakdowns, and three flavors of share metric, then watch nobody open it after week two. Strip it back to four panels and the dashboard starts driving decisions instead of decorating Notion pages.

The Four-Panel Rule

The constraint is the point. If you cannot defend why a fifth panel earns its place next to the four, it belongs on the operator view, where the analyst running the program needs the breakdown.

The image above shows the four-panel layout and the metrics that get cut.

Each panel answers a question your CFO will eventually ask:

- Are buyers seeing us when they ask AI for options?

- Is the underlying signal getting stronger or weaker?

- Is AI visibility crossing into demand we can attribute?

- Is any of this connected to revenue?

The hub guide on building an AI visibility dashboard covers the data plumbing underneath each panel. This post is about what the executive sees on top.

Panel 1: Shortlist Share

Shortlist share is the headline metric. For your priority query set (10 to 30 prompts real buyers ask), what percentage of AI responses name your brand in the recommended shortlist? Not merely mentioned somewhere in the answer, but named as one of the options the buyer should consider.

This is the closest analog to market share the AI surface gives you. Track it weekly across ChatGPT, Perplexity, Gemini, and Google AI Overviews as a single blended number, with platform breakouts on hover. Show the trendline over 12 weeks, current value, and delta versus the prior period.

One pitfall. Shortlist share moves slowly. A daily-refresh sparkline turns the panel into noise. Weekly resolution is the right cadence.
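To make the definition concrete, here is a minimal sketch of the blended number and the per-platform breakout. The `PromptResult` record, its field names, and the sample brands are hypothetical; the only assumption is that each tracked prompt run has already been parsed into the list of brands the answer recommends.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    """One tracked prompt run on one platform in one week (hypothetical schema)."""
    platform: str
    prompt: str
    shortlisted_brands: tuple[str, ...]  # brands named as recommended options


def shortlist_share(results, brand):
    """Percent of prompt runs whose recommended shortlist names `brand`."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if brand in r.shortlisted_brands)
    return 100.0 * hits / len(results)


def platform_breakout(results, brand):
    """Shortlist share per platform, for the hover breakouts."""
    by_platform = {}
    for r in results:
        by_platform.setdefault(r.platform, []).append(r)
    return {p: shortlist_share(rs, brand) for p, rs in by_platform.items()}


# Illustrative data only: one prompt, four platforms, made-up brands.
this_week = [
    PromptResult("ChatGPT", "best crm for startups", ("Acme", "Globex")),
    PromptResult("Perplexity", "best crm for startups", ("Globex",)),
    PromptResult("Gemini", "best crm for startups", ("Acme",)),
    PromptResult("Google AI Overviews", "best crm for startups", ("Initech",)),
]

blended = shortlist_share(this_week, "Acme")   # 50.0
prior_week = 25.0                              # assumed value from last week's run
delta = blended - prior_week                   # 25.0 points versus the prior period
```

Run it once per week over the full prompt set and store the blended value; the 12-week trendline is just the last twelve stored values.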

Panel 2: Citation Count

Citation count is the leading indicator. When AI responses cite your domain, the entity signal is strengthening. New citations typically show up two to six weeks before they translate into shortlist appearances.

What to show on the panel:

- Total citations across tracked queries this week

- Trendline over 12 weeks with delta versus the prior period

- Top 5 cited URLs

The URL breakdown tells the content team which pages are doing the work. If 80% of citations come from three pages, you know where to invest. If they are spread across 40 pages, the entity signal is diffuse and you have a different problem.
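The concentration check described above can be sketched in a few lines. This assumes you already have a flat list of cited URLs, one entry per citation, for the week; the function name and the sample paths are illustrative.

```python
from collections import Counter


def citation_summary(cited_urls, top_n=5, concentration_n=3):
    """Summarize one week of citations: total, top URLs, and concentration."""
    counts = Counter(cited_urls)
    total = sum(counts.values())
    # Share of all citations that come from the `concentration_n` most-cited pages.
    top_share = (
        100.0 * sum(c for _, c in counts.most_common(concentration_n)) / total
        if total
        else 0.0
    )
    return {
        "total": total,
        "top_urls": counts.most_common(top_n),
        "top_share_pct": top_share,
        "distinct_pages": len(counts),
    }


# Illustrative data: ten citations spread over five pages.
urls = ["/pricing"] * 5 + ["/guide"] * 2 + ["/blog/a", "/blog/b", "/docs"]
summary = citation_summary(urls)
# summary["total"] is 10 and summary["top_share_pct"] is 80.0:
# three pages carry 80% of the citations, so that is where to invest.
```

A low `top_share_pct` combined with a high `distinct_pages` count is the diffuse-signal case.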

For the relationship between citation rate and the OKR layer, the AI visibility KPIs and OKRs guide has the framing.

Panel 3: Branded-Search Lift

This is the panel most teams skip, and skipping it is why their AI program looks disconnected from anything the rest of marketing cares about. Branded-search lift is the week-over-week change in branded search volume that correlates with AI mention growth. When AI surfaces start naming your brand more, branded search rises within days, and branded search converts at three to ten times the rate of generic search.

Pull the data from Google Search Console (branded query volume) and overlay it with your shortlist share trend from Panel 1. Two lines on the same axis, normalized to 100 at the start of the tracking window.

If the lines diverge for more than three weeks, that is the alert. Either AI mentions are not translating to demand, or branded search is moving for reasons unrelated to AI. Either way the panel surfaces the question worth asking.
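Here is one way to sketch the normalization and the three-week alert. The post does not define how far apart the lines must be to count as diverging, so the `gap_threshold` of 15 index points is purely an assumption to tune against your own data; the function names are illustrative too.

```python
def normalize_to_100(series):
    """Rescale a weekly series so its first value equals 100."""
    base = series[0]
    if base == 0:
        raise ValueError("first value must be non-zero to normalize")
    return [100.0 * v / base for v in series]


def divergence_alert(shortlist_share, branded_volume, gap_threshold=15.0, max_weeks=3):
    """True if the two indexed lines stay apart for more than `max_weeks` in a row.

    Assumes both series are weekly, aligned, and of equal length.
    `gap_threshold` (index points) is an assumed cutoff, not a standard.
    """
    share_idx = normalize_to_100(shortlist_share)
    search_idx = normalize_to_100(branded_volume)
    streak = 0
    for s, b in zip(share_idx, search_idx):
        streak = streak + 1 if abs(s - b) > gap_threshold else 0
        if streak > max_weeks:
            return True
    return False


# Illustrative data: shortlist share climbs while branded search stays flat.
share = [20, 22, 24, 26, 28, 30]      # percent, weekly
branded = [1000] * 6                  # branded query volume, weekly
alert = divergence_alert(share, branded)   # True: four straight weeks above the gap
```

The alert does not say which explanation is right. It only flags that the question is now worth asking.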

Panel 4: Pipeline Contribution

This is the "why" panel. AI-influenced pipeline is the dollar figure tied to opportunities where AI was a touchpoint. There are two ways to attribute it, both imperfect.

- Self-reported source. Add a "How did you hear about us?" field to demo requests with ChatGPT, Perplexity, Gemini, and AI Overviews as options. Crude but directionally honest.

- Referral plus branded-search modeling. Combine pipeline from direct AI referral traffic with pipeline attributable to the branded-search lift from Panel 3 in the same window. More accurate, more arguable.

Show both numbers with methodology in a tooltip. Quarterly resolution, not weekly. The CFO looks at this panel, and quarterly is the cadence finance thinks in.
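A rough sketch of both attribution numbers follows. Everything about the data model is an assumption: the `Opportunity` fields, the `first_touch_channel` labels, and especially the modeling rule, which credits AI with the fraction of branded-search pipeline equal to the lift over a pre-program baseline. Treat that rule as one arguable choice, not the method.

```python
from dataclasses import dataclass

# Options offered in the "How did you hear about us?" field.
AI_SOURCES = {"ChatGPT", "Perplexity", "Gemini", "AI Overviews"}


@dataclass(frozen=True)
class Opportunity:
    """One pipeline opportunity in the quarter (hypothetical schema)."""
    amount: float
    self_reported_source: str
    first_touch_channel: str  # hypothetical labels: "ai_referral", "branded_search", ...


def self_reported_pipeline(opps):
    """Dollars from opportunities where the buyer named an AI surface."""
    return sum(o.amount for o in opps if o.self_reported_source in AI_SOURCES)


def modeled_pipeline(opps, branded_baseline, branded_current):
    """AI referral pipeline plus the lifted share of branded-search pipeline.

    Assumed rule: lift fraction = (current - baseline) / current, floored at 0.
    """
    referral = sum(o.amount for o in opps if o.first_touch_channel == "ai_referral")
    branded = sum(o.amount for o in opps if o.first_touch_channel == "branded_search")
    if branded_current <= 0:
        return referral
    lift_fraction = max(0.0, (branded_current - branded_baseline) / branded_current)
    return referral + branded * lift_fraction


# Illustrative quarter with made-up figures.
quarter = [
    Opportunity(50_000, "ChatGPT", "ai_referral"),
    Opportunity(30_000, "Google search", "branded_search"),
    Opportunity(20_000, "Perplexity", "branded_search"),
    Opportunity(40_000, "Referral", "organic"),
]
self_reported = self_reported_pipeline(quarter)                 # 70000
modeled = modeled_pipeline(quarter, branded_baseline=800,
                           branded_current=1000)                # about 60000
```

The two numbers will rarely agree, which is the reason to show both with the methodology in the tooltip.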

What to Leave Off

The vanity metrics that look impressive and tell you nothing:

- Total mention count without context

- Sentiment scores (too noisy to trend)

- Platform-by-platform leaderboards on the headline view

- Prompt-coverage percentages

- Share-of-voice splits that double-count shortlist share

Each of these has a place in the operator view, where the person running the program needs the granularity. None belong on the executive surface. If a stakeholder asks for sentiment, show them where it lives in the operator view rather than cramming a fifth tile onto the executive page.

Ready to build the dashboard against live data? Geology's GEO optimization service sets up the four-panel layout, wires the sources, and trains the team that runs it.