Compliance Risks of Generative AI for Finance Marketing

What are the AI compliance risks finance marketing teams miss when they only review the content their own brand publishes?

Most finance marketing teams treat AI compliance as a content-review problem. They run AI-generated drafts through legal, scrub for unsupported claims, and call the surface covered. The bigger risk sits outside that loop. It is what AI says about your products without you in the room: an outdated APY a model still quotes, a checking account misclassified as a money-market product, or a casual "this card is great for first-time buyers" framed as if a regulated representative said it. Reactive content review cannot catch these because your brand never produced the content. The AI did, citing your old pages, third-party reviews, or training data your compliance team has never seen.

This is the compliance surface our cluster post on AI compliance for regulated industries frames at the strategic level. What follows is the operating playbook for finance marketing teams.

The Two Compliance Surfaces: Outbound Content vs AI-Generated Answers

Finance marketing teams have always managed one surface: outbound content. Ads, landing pages, emails, disclosures. Every asset has a reviewer, a version, and a paper trail.

Generative AI introduces a second surface almost no team monitors: AI-generated answers about your products. When a prospect asks ChatGPT about high-yield savings, the model produces a paragraph that names you, quotes a rate, and characterizes the product. None of it touched your CMS or went through legal. A customer reads it and acts on it.

The legal question of whether you are liable for that answer is unsettled. The practical one is not. If a regulator opens an inquiry into whether consumers were misled, "ChatGPT said it, not us" is a thin defense, especially when the AI is quoting content you published five years ago.

The Five High-Risk Patterns in Finance AI Responses

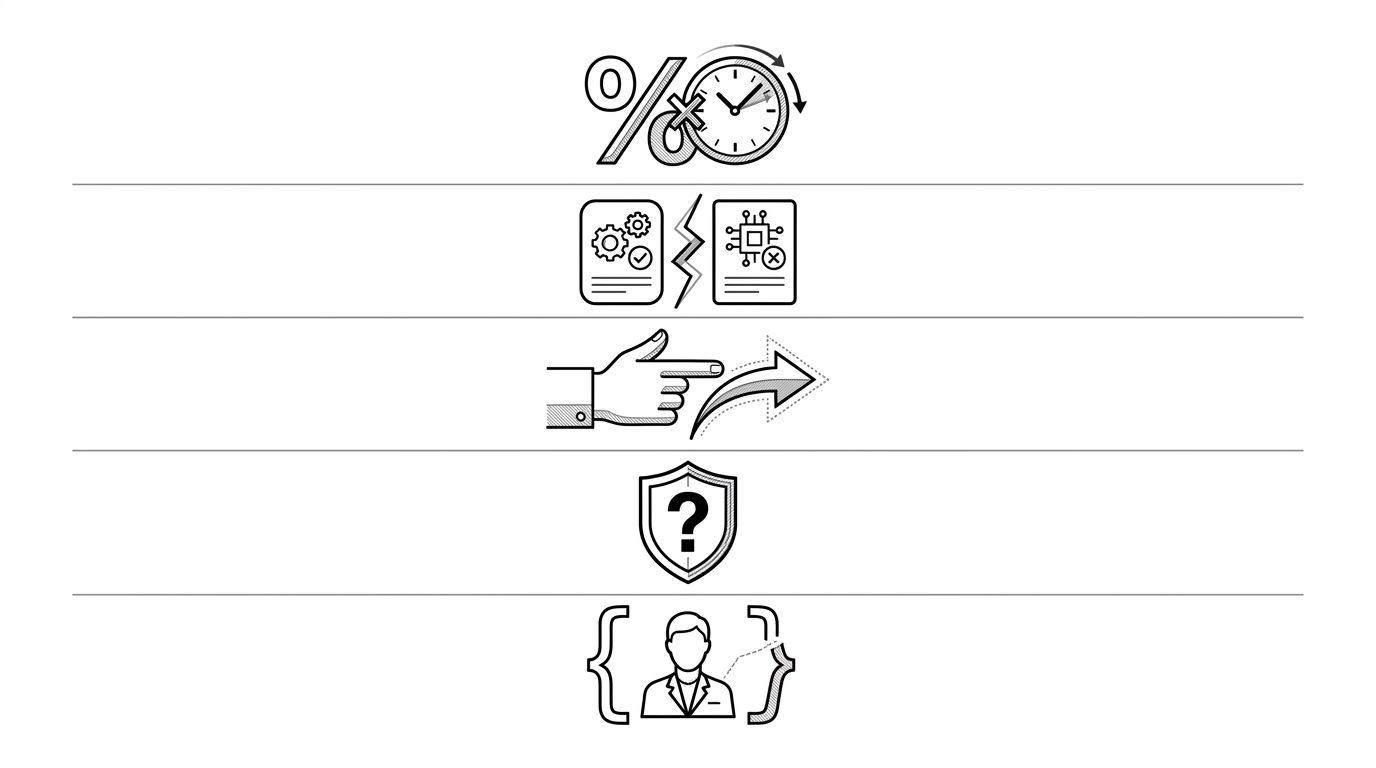

Across audits of bank, fintech, and wealth-management brands, five patterns repeat. The image below summarizes them.

- Stale rate quotes. AI states an APY, mortgage rate, or APR pulled from an older blog post or press release. The current rate is different. The customer is misled.

- Product misclassification. A high-yield savings account gets described as money-market. A secured card gets described as a credit-builder loan. The category mismatch carries different disclosures and protections.

- Implied recommendations. AI writes "this card is a good fit if you travel often" or "this account is best for first-time investors." For a regulated product, advice-flavored language without suitability context is a problem. For securities, the relevant standard is FINRA Rule 2111 (suitability); other product categories have similar frameworks.

- Fabricated guarantees. Models infer guarantees that do not exist, such as a "0% intro APR for 24 months" when the offer was 18, or coverage limits that were never offered.

- Buyer suitability mismatches. AI recommends a complex product (margin account, structured note, variable annuity) to a profile that would fail any suitability screen if a human advisor saw it.

Each pattern has a different fix path. Stale rates are a freshness problem. Misclassification is a schema and entity-disambiguation problem. Implied recommendations need explicit framing in the source content. Suitability mismatches need both content fixes and active monitoring.
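The freshness check for stale rates is the easiest of these to automate as a first pass. Below is a minimal sketch, assuming you already hold the current published rate for the product in question. The function names, the tolerance value, and the "Acme Bank" answers are hypothetical and only illustrate the idea. The check flags every percentage in an answer that differs from the current rate, so it will also catch unrelated figures; treat its output as a queue for human review, not a verdict.

```python
import re

# Matches a number followed by a percent sign, e.g. "4.25%" or "5 %".
PERCENT_RE = re.compile(r"(\d+(?:\.\d+)?)\s*%")


def quoted_rates(answer: str) -> list[float]:
    """Return every percentage figure that appears in an AI answer."""
    return [float(value) for value in PERCENT_RE.findall(answer)]


def stale_rates(answer: str, current_rate: float, tolerance: float = 0.005) -> list[float]:
    """Return quoted percentages that differ from the current published rate.

    `tolerance` is an assumed allowance, in percentage points, for
    floating-point noise. It is not a regulatory threshold.
    """
    return [r for r in quoted_rates(answer) if abs(r - current_rate) > tolerance]


# Hypothetical answers about a product whose current APY is 4.10%.
old_answer = "Acme Bank's high-yield savings account pays 4.50% APY."
fresh_answer = "Acme Bank's high-yield savings account pays 4.10% APY."

flagged = stale_rates(old_answer, current_rate=4.10)
clean = stale_rates(fresh_answer, current_rate=4.10)
```

Here `flagged` holds the 4.50 figure for a reviewer to examine, while `clean` is empty because the quoted rate matches the current one.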

What FINRA, SEC, and CFPB Rules Actually Say

Regulator language has not caught up to AI-generated content specifically, but existing rules already cover most of the risk.

- FINRA Rule 2210 requires public communications to be fair, balanced, and not misleading. Regulatory Notice 24-09 reminded member firms that FINRA's rules are technology-neutral and continue to apply when firms use generative AI, which includes AI-generated communications a member firm distributes. The open question is what counts as "distributed" when an AI quotes your archived content.

- SEC Marketing Rule 206(4)-1 for investment advisers prohibits untrue statements and misleading implications. The 2021 update is being read broadly enough to capture AI-mediated communications about adviser services.

- CFPB UDAAP authority covers unfair, deceptive, or abusive acts or practices. Consumer harm caused by an AI quoting an outdated APY is a candidate UDAAP claim, especially if the misinformation was foreseeable and you took no steps to correct the public record.

The defensible position is not "we did not say that." It is "we have a documented program for monitoring and correcting AI-generated representations of our products." Regulators reward programs.

A Monthly Compliance-Review Workflow

A workable cadence has four steps and does not require a new team. It is a redirection of effort already in place for outbound review.

- Sample the prompt set. Pull 30 to 50 prompts a real customer would ask about each regulated product line. Run them across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Capture the answers.

- Triage by pattern. Tag each answer against the five patterns above. Stale-rate and misclassification cases are highest priority because they are the easiest for a regulator to identify.

- Trace the source. For each flagged answer, identify the content the AI is drawing from: your archive, a third-party site, a review aggregator. Source determines fix path.

- Fix and republish. Update the source content, refresh schema with explicit dates, push corrections to high-authority third-party sources. Document what was fixed and when.
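The four steps above can be tracked with a very small data model. The sketch below is one possible shape, not a prescribed tool: the `Finding` record, its field names, and the sample entries are all hypothetical. Tagging is assumed to be done by a human reviewer. The code only orders the review queue so that stale-rate and misclassification cases come first, and it leaves room to record the traced source and the fix date.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import Optional


class Pattern(Enum):
    STALE_RATE = "stale rate quote"
    MISCLASSIFICATION = "product misclassification"
    IMPLIED_RECOMMENDATION = "implied recommendation"
    FABRICATED_GUARANTEE = "fabricated guarantee"
    SUITABILITY_MISMATCH = "buyer suitability mismatch"


# The two patterns the workflow treats as highest priority.
HIGH_PRIORITY = {Pattern.STALE_RATE, Pattern.MISCLASSIFICATION}


@dataclass
class Finding:
    prompt: str                       # step 1: the sampled customer prompt
    engine: str                       # which AI system produced the answer
    answer: str                       # captured answer text
    patterns: set[Pattern] = field(default_factory=set)  # step 2: reviewer tags
    source: Optional[str] = None      # step 3: where the AI drew the claim from
    fixed_on: Optional[date] = None   # step 4: when the source was corrected

    @property
    def high_priority(self) -> bool:
        return bool(self.patterns & HIGH_PRIORITY)


def triage(findings: list[Finding]) -> list[Finding]:
    """Drop untagged answers and list high-priority findings first."""
    flagged = [f for f in findings if f.patterns]
    # sorted() is stable, so the original order is kept within each group.
    return sorted(flagged, key=lambda f: not f.high_priority)


# Hypothetical sample of three captured answers.
findings = [
    Finding("best card for travel?", "ChatGPT", "...", {Pattern.IMPLIED_RECOMMENDATION}),
    Finding("savings APY?", "Perplexity", "...", {Pattern.STALE_RATE}, source="archived blog post"),
    Finding("what is a checking account?", "Gemini", "..."),
]
queue = triage(findings)
```

In this sample, `queue` contains the Perplexity finding first and the ChatGPT finding second; the untagged Gemini answer is dropped. Setting `fixed_on` once a source is corrected gives the dated record that the last step asks for.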

The same fixes that close compliance gaps also strengthen how AI describes your products. The trust-signal logic for that lift is in our companion post, GEO for financial services.

If you run marketing or compliance for a bank, broker-dealer, fintech, or wealth manager, scope the second surface this quarter. Pick one product line. Pull 50 prompts. See what AI says. The first audit is almost always uncomfortable, and that discomfort is the data you need to brief leadership. For enterprise teams running this across multiple regulated product lines, our enterprise solution page covers the program model.