Technical GEO vs Technical SEO, What Overlaps and What's New

Which parts of your existing technical SEO setup already cover GEO, and which new layer is actually worth the rebuild effort?

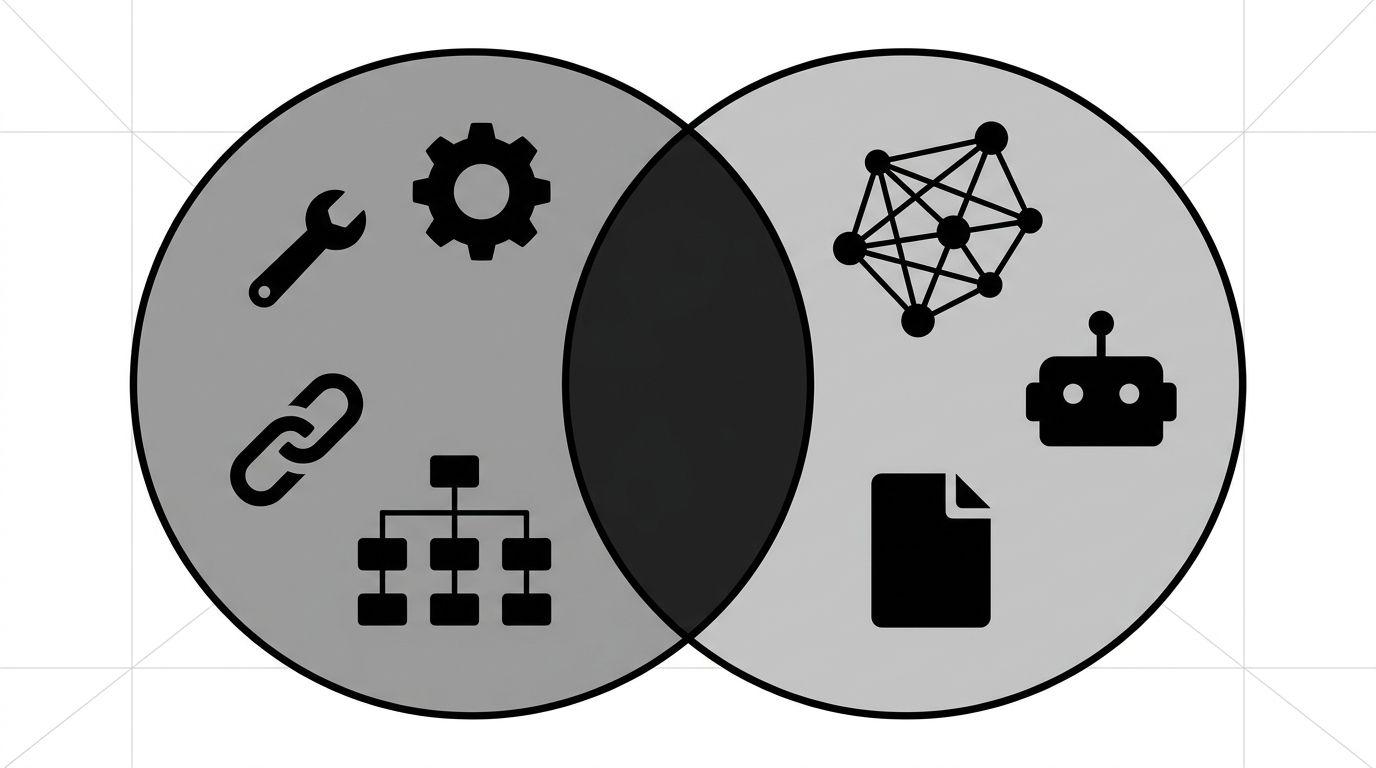

Roughly 70% of technical SEO carries over to GEO unchanged. Schema, sitemaps, crawl access, server-side rendering, page speed, canonical URLs: all of it does the same job for AI retrieval that it does for Googlebot. The remaining 30% is new, and it is where teams tend to pour all of their attention: llms.txt, citation-friendly content structure, AI-specific feeds. Knowing what overlaps saves you from rebuilding what already works.

The mistake is treating GEO as a separate technical program. It isn't. It's a small extension on top of a working setup. If your foundation is solid, GEO is a few weeks of focused additions, not a six-month rearchitecture. The technical GEO guide covers the implementation playbook. This post answers the upstream question: what do you keep, what do you add, and what do you stop spending on?

The overlap, what already works

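The crawl infrastructure that lets Googlebot read your site is what GPTBot, PerplexityBot, and ClaudeBot rely on too. (Google-Extended is a robots.txt control token rather than a separate crawler; Google's existing crawlers do the fetching.) The schema you wrote for rich results is what AI models parse for entity relationships.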

The pieces that carry over with no changes:

- Server-side rendering. AI crawlers don't execute JavaScript reliably, so SSR or SSG is the right call for both channels.

- JSON-LD schema (Organization, Product, FAQPage, Article). AI models read these as first-class entity signals.

- XML sitemaps with accurate lastmod dates. Retrieval-based AI systems use freshness signals like Googlebot does.

- Canonical URLs. Duplicate content confuses ranking algorithms and AI training pipelines alike.

- HTTP/2, edge caching, Brotli compression, sub-200ms TTFB. Crawl budget logic applies to AI bots too.

- Clean heading hierarchy and semantic HTML. The DOM tree that earns rich results is what AI extractors parse.
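One way to sanity-check the shared foundation is to look at a page the way a crawler that does not run JavaScript would: parse the raw HTML and confirm the canonical link, the JSON-LD block, and the top-level heading are already there. The sketch below uses only the Python standard library; the `RawHtmlAudit` class, the `audit` helper, and the sample page are illustrative assumptions, not part of any crawler's actual pipeline.

```python
import json
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collects signals visible in un-rendered HTML (no JavaScript executed)."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.h1_count = 0
        self.schema_types = []
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script bodies can arrive in several chunks, so buffer them.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                doc = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # malformed JSON-LD is skipped, not fatal
            items = doc if isinstance(doc, list) else [doc]
            self.schema_types += [i.get("@type") for i in items if isinstance(i, dict)]


def audit(html):
    """Return the signals found in the raw HTML string."""
    parser = RawHtmlAudit()
    parser.feed(html)
    parser.close()
    return {
        "canonical": parser.canonical,
        "h1_count": parser.h1_count,
        "schema_types": parser.schema_types,
    }


# Hypothetical server-rendered page used only for illustration.
SAMPLE = """<html><head>
<link rel="canonical" href="https://example.com/pricing">
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Organization", "name": "Example Co"}
</script>
</head><body><h1>Pricing</h1><p>Plans start at a flat monthly fee.</p></body></html>"""

report = audit(SAMPLE)
print(report)
```

If the same check against your production HTML comes back with no canonical, no schema types, or zero `h1` elements, the content is probably being injected client-side, and that is a foundation problem to fix before any GEO work.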

The framing change is authority. SEO weighs domain authority through backlinks; GEO weighs entity authority through citations across the open web. Same mechanics, different interpretation. For the broader strategic comparison, see GEO vs SEO.

The new layer, what GEO adds

This is the 30% that doesn't exist in a pure technical SEO playbook, and the 30% that delivers the lift, because most competitors haven't shipped it yet.

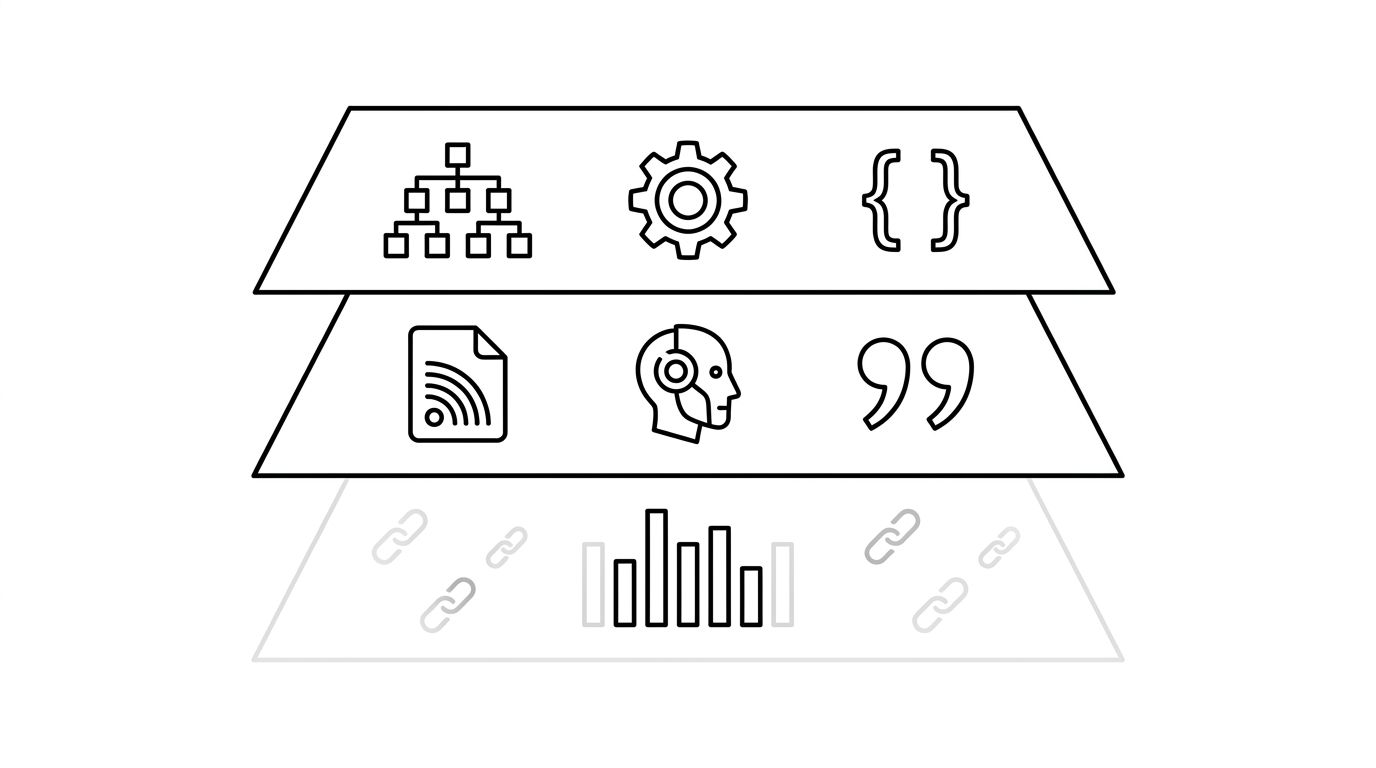

The diagram above shows the three layers stacked: shared foundation at the bottom, new GEO additions in the middle, deprioritized signals on top.

The new layer has four pieces:

- llms.txt. A machine-readable index pointing AI crawlers at your most citable pages. Robots.txt tells bots what they can read; llms.txt tells them what is worth reading.

- Citation-friendly content structure. AI retrieval extracts short, self-contained passages. Pages that bury the answer get skipped. Pages that lead with a direct factual statement get cited.

- AI-specific content feeds. A feed of your highest-authority pages (research, benchmarks, FAQ databases, product specs) for AI ingestion pipelines.

- Bot-specific access decisions. Robots.txt entries for GPTBot, PerplexityBot, ChatGPT-User, Google-Extended, ClaudeBot, and CCBot, with intentional choices about training versus retrieval.
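The bot-specific access decisions can be kept as a small policy table and rendered into robots.txt, then checked with the standard library's `urllib.robotparser`. This is a minimal sketch: the allow/block choices in `POLICY` are placeholders rather than recommendations, and `render_robots` is a hypothetical helper.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical per-bot policy: True = may crawl, False = blocked.
# These values are illustrative only; decide each one deliberately.
POLICY = {
    "GPTBot": False,
    "CCBot": False,
    "Google-Extended": False,
    "ChatGPT-User": True,
    "PerplexityBot": True,
    "ClaudeBot": True,
}


def render_robots(policy):
    """Render one explicit robots.txt group per crawler token."""
    lines = []
    for agent, allowed in policy.items():
        lines += [f"User-agent: {agent}", "Allow: /" if allowed else "Disallow: /", ""]
    # Every other crawler keeps default access in this example.
    lines += ["User-agent: *", "Allow: /"]
    return "\n".join(lines) + "\n"


robots_txt = render_robots(POLICY)

# Verify the rendered file says what the policy table intends.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in POLICY:
    print(agent, parser.can_fetch(agent, "https://example.com/pricing"))
```

Keeping the policy in one table makes the training-versus-retrieval decision reviewable in a pull request instead of buried in a hand-edited text file.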

Four items. None require a CMS migration or rendering changes. These are configuration and content-structure choices, which is why a technical SEO team can ship them in weeks.
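As an illustration of how small the llms.txt artifact is, the sketch below renders one from a list of pages. It assumes the layout described in the public llms.txt proposal (a title line, a short blockquote summary, then sections of annotated links); the `Page` type, `render_llms_txt`, and the example URLs are hypothetical, and how any given AI system consumes the file is not guaranteed.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    summary: str


def render_llms_txt(site_name, tagline, sections):
    """Render a markdown-style llms.txt: title, summary, then link sections."""
    lines = [f"# {site_name}", "", f"> {tagline}", ""]
    for heading, pages in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for page in pages:
            lines.append(f"- [{page.title}]({page.url}): {page.summary}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site content used only for illustration.
llms_txt = render_llms_txt(
    "Example Co",
    "Example Co sells widgets and publishes widget benchmarks.",
    {
        "Product": [
            Page("Pricing", "https://example.com/pricing", "Plans and what each includes."),
        ],
        "Research": [
            Page("Benchmark", "https://example.com/benchmark", "Annual widget performance data."),
        ],
    },
)
print(llms_txt)
```

Generating the file from the same source that drives the sitemap keeps the two from drifting apart as pages are added or retired.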

The deprioritized layer, what's now lower-priority

Some technical SEO work still produces ranking value but does little for AI visibility. Recognizing this frees up time for the new-layer work.

- Keyword density and exact-match optimization. AI models parse entities, not keyword frequency.

- Internal linking purely for link equity. PageRank-style flow still matters in SEO, but for GEO the value is in the entity-relationship signal the link carries.

- Core Web Vitals as a primary lever. CWV matters as a baseline, but raising a PageSpeed score from 92 to 98 is likely to move AI visibility less than shipping llms.txt does.

- Featured-snippet optimization. AI Overviews and direct AI answers are eating that surface. Optimize for citation, not the snippet box.

None are abandoned. They are just not where the next engineering hour goes.

A staged migration path for technical SEO teams

For a team moving from technical-SEO-only to a combined practice, sequencing matters.

1. Audit the overlap. Confirm SSR, schema, sitemaps, canonicals, and speed are healthy. GEO can't help a broken foundation.

2. Open AI bot access intentionally. Add explicit robots.txt entries for the major AI crawlers and decide per bot: training, retrieval, both, or neither.

3. Ship llms.txt. The lowest-effort, highest-impact addition.

4. Restructure your top 20 pages for citation. Lead-with-the-answer rewrites on highest-traffic content.

5. Add an AI-specific feed.

6. Measure visibility, not rankings. Brand mention rate, sentiment, and shortlist position across the major AI platforms.
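For the measurement step, brand mention rate reduces to simple arithmetic: the share of sampled AI answers that name your brand, tracked per platform. The sketch below assumes you already collect answers to a fixed prompt set by whatever means you use; the platform names, sample answers, and `mention_rates` helper are hypothetical, and sentiment and shortlist position would need their own scoring.

```python
from collections import defaultdict


def mention_rates(samples, brand):
    """Share of answers per platform that mention the brand (case-insensitive)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, answer in samples:
        totals[platform] += 1
        if brand.lower() in answer.lower():
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical sampled answers: (platform, answer text).
SAMPLES = [
    ("assistant_a", "Top picks are Example Co and Acme."),
    ("assistant_a", "Acme is the usual choice."),
    ("assistant_b", "Many teams use Example Co."),
]

rates = mention_rates(SAMPLES, "Example Co")
print(rates)
```

Substring matching is deliberately crude here; a real tracker would also need to handle brand aliases and misspellings.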

Steps one through three fit in a sprint. Four and five run through the content workflow. Step six is the operating-model change, the one worth the most attention. For help running the technical pieces alongside your SEO program, our technical SEO service is built for this combined audit.

Technical GEO is a small extension: three new artifacts and one new content discipline. Teams with the foundation in place are weeks away from AI visibility. Teams without it should fix that first.