Insurance Brand Reputation in AI — Regulator-Safe Practices

Which content patterns let insurance brands earn AI citations under state DOI scrutiny without inflating claims or going silent?

Insurance brand reputation in AI lives or dies on three signals: claims-handling reputation, product-language consistency, and third-party review presence. State Departments of Insurance (DOI) constrain how carriers and brokers talk about each, but the constraints target marketing claims (no inflated reputation language, no comparative superlatives, no implied guarantees); they do not require silence. The carriers winning ChatGPT and Perplexity citations are the ones publishing the most specific content that is still regulator-safe. The ones treating DOI rules as a reason to publish nothing are watching aggregators, lead-gen sites, and a handful of national brands answer questions about the products those silent carriers sell.

This post is the practitioner companion to the AI compliance hub for regulated industries. The hub frames the macro risk; here the focus is the day-to-day choices an insurance marketer makes when shipping AI-targeted content under state DOI scrutiny.

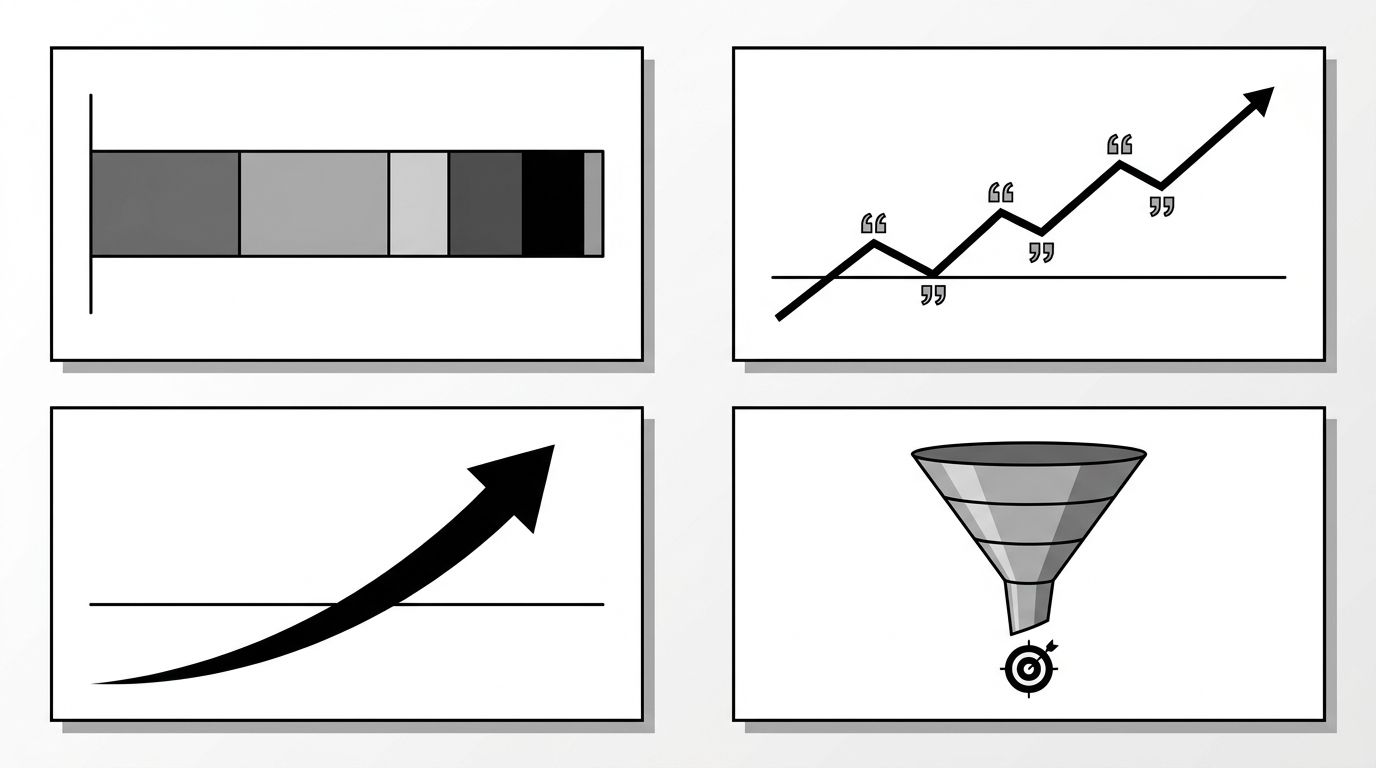

The three reputation signals AI weighs most for insurance brands

When ChatGPT, Perplexity, or Google AI Overviews answer a question like "is [carrier] good for restaurant insurance," the model is not running a rating algorithm. It is reading patterns across the sources it can access and surfacing what the most consistent, structured signals say. Three signals dominate that pattern:

- Claims-handling reputation. AI models read NAIC complaint indexes, J.D. Power studies, BBB summaries, and recent press coverage. A brand with a clean complaint trend and specific, dated public commentary on its claims process gets cited. A brand with neither gets summarized from forum posts.

- Product-language consistency. When the same product name appears with the same scope across the carrier site, the agent network, partner publications, and review platforms, the AI treats it as a confident entity. Mixed language ("BOP," "business owner's policy," "small business package") across sources fragments the entity and dilutes citation odds.

- Third-party review presence. Trustpilot, Google Business Profile reviews, and vertical platforms like Insurify and The Zebra act as ground-truth checks. AI models cross-reference what your brand says about itself against what reviewers say. Carriers absent from those platforms read as low-confidence sources to the model.
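The fragmentation described under product-language consistency can be spotted by scanning each source for competing product names. What follows is a minimal Python sketch, assuming you have already collected the text from each source. The variant list comes from the example above; the source labels and function names are hypothetical.

```python
import re
from collections import defaultdict

# Name variants taken from the example in the text; adjust for your own products.
VARIANTS = ("BOP", "business owner's policy", "small business package")


def variants_by_source(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each variant to the set of sources whose text mentions it."""
    found: dict[str, set[str]] = defaultdict(set)
    for source, text in pages.items():
        for variant in VARIANTS:
            # Whole-word, case-insensitive match so "BOP" does not hit "bebop".
            pattern = r"\b" + re.escape(variant) + r"\b"
            if re.search(pattern, text, re.IGNORECASE):
                found[variant].add(source)
    return dict(found)


def is_fragmented(pages: dict[str, str]) -> bool:
    """True when more than one variant is in use across the sources."""
    return len(variants_by_source(pages)) > 1
```

If a carrier page says "BOP" while a review page says "small business package", `is_fragmented` returns `True`, which is the cue to pick one name and align the other sources to it.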

The accompanying image shows how the three signals stack into a single reputation surface that AI models read.

State DOI constraints: what you can and can't say

State Departments of Insurance regulate the marketing of insurance products, not the existence of marketing content. The most cited DOI restrictions across major states (California, New York, Texas, Florida, Illinois) cluster around a few specific prohibitions: unfair comparative claims, unsubstantiated superiority statements, undisclosed limitations, and references to ratings or rankings without the source and date.

That set is narrower than most marketing teams assume. The list of things a carrier can publish, with proper review, is long: dated NAIC complaint-index numbers, specific average claims-cycle days, named third-party ratings with citation, product-feature comparisons that stay factual, and process documentation for filing a claim. The mistake is conflating the prohibition on inflated language with a prohibition on specifics. Specifics are what AI models cite. Vague language gets summarized away.

A useful internal test: would the sentence pass a state filing review if it appeared in a printed brochure? If yes, it is safe to publish. If the sentence depends on words like "best," "leading," or "fastest," rewrite it with a number or a dated source.
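The second half of that test can be partly automated as a first-pass lint before a draft reaches compliance. The sketch below assumes only the three words named above; the function name is hypothetical, and a clean result means only that none of those words appear, not that the sentence would pass a filing review.

```python
import re

# Words the text singles out as red flags; extend the tuple for your own style guide.
FLAGGED_WORDS = ("best", "leading", "fastest")

# Whole-word, case-insensitive match, so "misleading" is not flagged as "leading".
_PATTERN = re.compile(r"\b(" + "|".join(FLAGGED_WORDS) + r")\b", re.IGNORECASE)


def find_superlatives(sentence: str) -> list[str]:
    """Return the flagged words found in a sentence, lowercased, in order of appearance."""
    return [match.group(1).lower() for match in _PATTERN.finditer(sentence)]
```

Any sentence that returns a non-empty list is a candidate for rewriting with a number or a dated source.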

Specific-but-safe content patterns that earn AI citations

Four patterns hold up across DOI reviews and earn AI citations consistently. None of them require new claims; they require structuring claims you can already make.

- Process documentation with timing. Publish the actual steps and target windows for filing and resolving a claim. "First-notice acknowledgment within one business day, adjuster contact within three" is regulator-safe and AI-citable. Vague reassurances about responsiveness are not.

- Product-scope tables. A clear table of what each product covers, what it excludes, and which states it is licensed in. AI models extract structured comparisons better than narrative coverage descriptions, and the table format makes the disclosure surface explicit for compliance.

- Dated third-party citations. When you reference a J.D. Power score, NAIC complaint index, or AM Best rating, name the source, the year, and the methodology link. AI models treat dated citations as higher-confidence than undated ones, and DOI reviewers treat them as compliant by default.

- Use-case explainers. Long-form content explaining when a coverage applies and when it does not, written for a small-business owner rather than an underwriter. The Geology insurance case study shows a California broker using exactly this pattern to capture ChatGPT citations for med spa and restaurant insurance segments within four weeks.
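The dated-citation pattern above lends itself to a simple completeness check before publication. This is a minimal sketch, assuming each rating reference is stored as a small record. The `RatingCitation` class and its field names are hypothetical; the three required pieces (source, year, methodology link) come from the text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RatingCitation:
    source: str                     # e.g. "NAIC complaint index"
    year: Optional[int]             # year the rating or study was published
    methodology_url: Optional[str]  # link to the publisher's methodology page


def missing_fields(citation: RatingCitation) -> list[str]:
    """List which of the three required pieces are absent from a citation."""
    missing = []
    if not citation.source.strip():
        missing.append("source")
    if citation.year is None:
        missing.append("year")
    if not citation.methodology_url:
        missing.append("methodology_url")
    return missing
```

A citation that returns an empty list names its source, year, and methodology link; anything else goes back to the writer before review.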

What does not work: generic "why choose us" pages, founder narratives, or testimonial walls without verifiable third-party context. Those pass legal review but contribute almost nothing to the AI citation surface because they carry no extractable fact.

Working with internal legal to ship faster

The bottleneck for most insurance content programs is not regulation; it is review cycle time. A carrier with weekly compliance review and an annual filing calendar will lose to a broker with daily review and a documented review playbook. Two changes consistently cut cycle time without weakening compliance:

- Pre-approved language libraries. Maintain a shared bank of product descriptions, disclosures, and claims-process language that compliance has already cleared. Writers compose from the library; review focuses only on the new sentences. This approach also reinforces the product-language consistency signal AI models read.

- Risk-tiered review. Not every page needs the same scrutiny. A factual FAQ about deductibles carries a different risk profile than a comparative landing page. Tier the review process so low-risk content ships in 48 hours and only high-risk content goes through full filing review.
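Risk-tiered review can be expressed as a small routing rule. The sketch below assumes pages are tagged with a type label. The labels and the function name are hypothetical, and sending unrecognized types down the stricter path is a design assumption of this sketch, not a rule from any DOI.

```python
# Hypothetical page-type labels; "factual_faq" mirrors the deductible FAQ example in the text.
LOW_RISK_TYPES = {"factual_faq"}


def review_route(page_type: str) -> str:
    """Pick a review path for a page based on its type label."""
    if page_type in LOW_RISK_TYPES:
        return "48-hour review"
    # Comparative pages and anything unrecognized take the stricter path.
    return "full filing review"
```

Compliance would own the set of low-risk types, so adding a category to the fast lane is an explicit, reviewable decision.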

For larger carriers, the cost of slow review compounds in the form of competitor citations. Every week a regulator-safe answer is not on your site is a week aggregators and rivals get cited instead. If you run a multi-state book and need to scale this discipline, the enterprise solution handles the workflow side: visibility tracking by state, citation monitoring by product line, and review pipelines built around the constraints insurance teams actually work under.