Review Signals and AI: How Customer Reviews Influence AI Recommendations

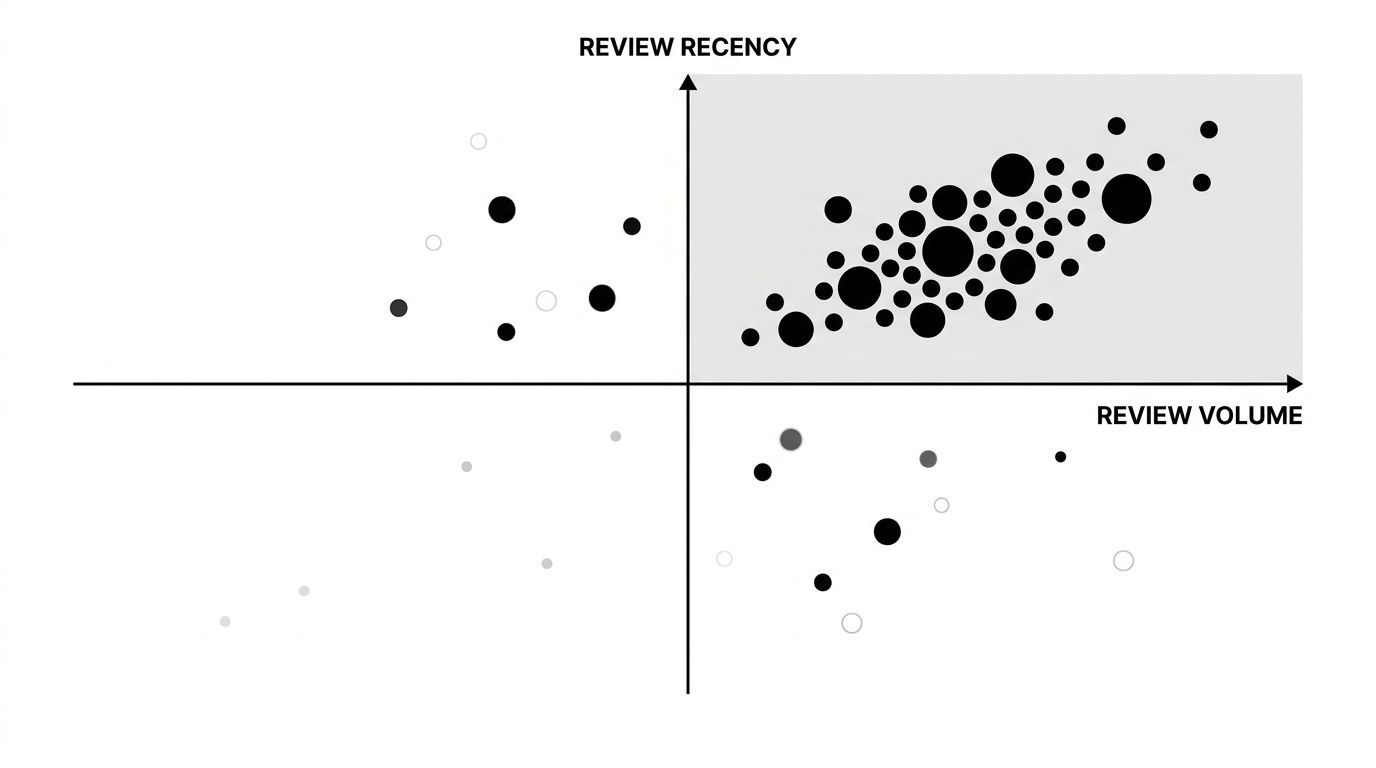

Review recency beats review volume when AI models decide which brand to recommend. In side-by-side prompt tests across ChatGPT, Perplexity, and Gemini, brands with 120 reviews from the last six months consistently outranked brands with 4,000 reviews whose median review date was in 2022. This overturns the assumption most teams operate on: that more reviews mean more visibility. If your review program tracks total review count instead of rolling 180-day velocity (the number of reviews published in the trailing six months), you are optimizing the wrong metric for AI recommendations.

Why Recency Outweighs Volume

Large language models weigh what they can ingest and verify. A review from March 2026 is a crawlable, dated signal that a customer is currently using your product. A review from 2021 does not tell the model that the product still exists, still works, or still solves the problem being asked about. Retrieval-augmented responses in Perplexity and Google AI Overviews explicitly favor recent source documents.

There is also a training-data effect. When models are fine-tuned on fresh web crawls, the most recently indexed review content carries more weight in the associations the model forms between a brand and the problems it solves. Old reviews on legacy domains read as archived data, not current customer sentiment.

The Three Review Signals AI Models Actually Read

Not every review field matters equally. From the data we track across client audits:

- Review text length and specificity: A 180-word review naming the exact use case is cited more often than a five-star rating with no text. Models need extractable claims.

- Platform authority: G2, Capterra, Trustpilot, and Amazon are weighted more heavily than lesser-known review aggregators. The host domain's reputation transfers to the reviews on it.

- Response ratio: Brands that publicly respond to 40% or more of their reviews, including negative ones, show up more often in AI brand summaries. Response activity is a trust signal.

The quadrant above shows where AI mentions cluster in our dataset. High recency with moderate volume wins over high volume with low recency, every time. Chase the top-right, not the right edge.
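To check where you stand on the response-ratio signal, you can compute it from a review export. The Python sketch below is a minimal illustration, assuming a hypothetical `Review` record with a star `rating`, the review `text`, and a `has_response` flag; real platform exports use their own field names, and treating 1 to 2 stars as "negative" is an assumption, not a platform definition. The 40% target is the threshold cited in the list above.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """Hypothetical review record; field names are illustrative, not any platform's schema."""
    rating: int         # star rating, 1 to 5
    text: str           # review body (may be empty)
    has_response: bool  # True if the brand posted a public reply


RESPONSE_TARGET = 0.40  # the "40%+" response threshold from the article


def response_ratio(reviews: list[Review]) -> float:
    """Share of reviews (0.0 to 1.0) that received a public brand response."""
    if not reviews:
        return 0.0
    return sum(r.has_response for r in reviews) / len(reviews)


def negative_response_ratio(reviews: list[Review], max_rating: int = 2) -> float:
    """Same ratio restricted to critical reviews (assumed here to mean <= max_rating stars)."""
    negatives = [r for r in reviews if r.rating <= max_rating]
    return response_ratio(negatives)


if __name__ == "__main__":
    # Made-up sample data for illustration only.
    sample = [
        Review(5, "Cut our month-end close from five days to two.", True),
        Review(2, "CSV export fails on large files.", True),
        Review(4, "Support team was helpful.", False),
        Review(1, "Billing was confusing.", False),
        Review(5, "", False),
    ]
    overall = response_ratio(sample)
    print(f"overall: {overall:.0%}, negatives: {negative_response_ratio(sample):.0%}")
    print("meets target" if overall >= RESPONSE_TARGET else "below target")
```

Tracking the negative-review ratio separately matters because a team can clear 40% overall by replying only to praise, which skips the critical reviews this post argues carry the most weight.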

What To Do This Quarter

You do not need to restart your review program; you need to re-point it.

- Audit your 180-day review velocity. Count the reviews published in the last six months across every platform. If you are in a competitive category and averaging fewer than 20 reviews per month, you are effectively invisible to AI.

- Prioritize platforms that models already crawl. G2 and Trustpilot show up in ChatGPT citations; smaller industry-specific review sites rarely do. Shift investment toward where AI actually looks.

- Request reviews with specifics. A request like "what problem did our product solve for you last month?" produces more AI-usable text than "rate your experience." Specificity is what gets quoted.

- Respond publicly, especially to critical reviews. This is the signal most brands miss. Models treat response activity as active brand stewardship.
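The velocity audit from the first step can be sketched in a few lines of Python. This is a minimal example, assuming you have already exported each review's platform and publication date into the hypothetical `DatedReview` record; it does not call any review platform's API. It approximates the 180-day window as six 30-day months and flags the 20-per-month line this post uses for competitive categories.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta

WINDOW_DAYS = 180       # rolling window from the article
MONTHLY_THRESHOLD = 20  # the "under 20 per month" warning line from the article


@dataclass
class DatedReview:
    """Hypothetical export row; map your platforms' own fields onto it."""
    platform: str
    published: date


def velocity_audit(reviews: list[DatedReview], today: date) -> dict:
    """Count reviews published in the WINDOW_DAYS days up to and including `today`."""
    cutoff = today - timedelta(days=WINDOW_DAYS)
    recent = [r for r in reviews if cutoff < r.published <= today]
    per_month = len(recent) / (WINDOW_DAYS / 30)  # treats 180 days as six 30-day months
    return {
        "recent_total": len(recent),
        "per_month": per_month,
        "by_platform": Counter(r.platform for r in recent),
        "below_threshold": per_month < MONTHLY_THRESHOLD,
    }


if __name__ == "__main__":
    # Made-up sample data; the 2022 review falls outside the window and is ignored.
    reviews = [
        DatedReview("G2", date(2026, 3, 2)),
        DatedReview("G2", date(2026, 1, 15)),
        DatedReview("Trustpilot", date(2025, 11, 20)),
        DatedReview("Capterra", date(2022, 6, 1)),
    ]
    audit = velocity_audit(reviews, today=date(2026, 3, 31))
    print(audit["recent_total"], audit["per_month"], dict(audit["by_platform"]))
    if audit["below_threshold"]:
        print("Below 20 reviews/month: likely low visibility in a competitive category.")
```

Rerunning the same function each month gives you the rolling number to track, rather than an all-time total that never goes down. The `by_platform` breakdown also shows whether recent reviews are landing on the platforms AI tools cite.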

The Negative Review Angle Nobody Talks About

Burying bad reviews hurts AI visibility. When an AI model summarizes your brand and finds only positive reviews, it either flags the pattern as suspicious or pulls criticism from Reddit and forum threads you do not control. A handful of thoughtful negative reviews, with substantive public responses from your team, gives models a balanced signal and keeps the narrative on platforms you can influence. Our write-up on AI sentiment monitoring covers how to read this mix.

Measuring the Lift

Before you retool your review program, baseline your current AI visibility. Run 20 to 30 category-defining prompts in ChatGPT and Perplexity, note which brands are mentioned, and rerun the same prompts 90 days after your recency push. If your brand shifts from unmentioned to cited, review velocity is working. If not, the bottleneck is likely elsewhere, such as product page optimization or thin third-party coverage.
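A minimal way to score the before-and-after comparison is to save each prompt's response text and check whether your brand appears. The Python sketch below assumes responses are stored as a hypothetical `{prompt: response_text}` dictionary per run. It uses a naive case-insensitive whole-word match as a stand-in for real mention detection: it will miss abbreviations and misspellings, and it assumes the brand name begins and ends with a letter or digit. The brand and prompts in the example are made up.

```python
import re


def mentions(brand: str, response: str) -> bool:
    """Naive case-insensitive whole-word match; assumes the brand starts and ends with a word character."""
    return re.search(rf"\b{re.escape(brand)}\b", response, re.IGNORECASE) is not None


def mention_rate(brand: str, responses: dict[str, str]) -> float:
    """Share of prompts (0.0 to 1.0) whose response names the brand."""
    if not responses:
        return 0.0
    return sum(mentions(brand, text) for text in responses.values()) / len(responses)


def compare_runs(brand: str, baseline: dict[str, str], rerun: dict[str, str]) -> dict:
    """Compare a baseline run with the 90-day rerun, scoring only prompts present in both."""
    shared = baseline.keys() & rerun.keys()
    before = {p: baseline[p] for p in shared}
    after = {p: rerun[p] for p in shared}
    newly_cited = sorted(
        p for p in shared if not mentions(brand, before[p]) and mentions(brand, after[p])
    )
    return {
        "baseline_rate": mention_rate(brand, before),
        "rerun_rate": mention_rate(brand, after),
        "newly_cited": newly_cited,  # prompts that moved from unmentioned to cited
    }


if __name__ == "__main__":
    # Hypothetical brand and saved responses, for illustration only.
    baseline = {
        "best invoicing software for freelancers": "Top picks: Zeta Books and Omega Pay.",
        "invoicing tool with recurring billing": "Consider Omega Pay or Zeta Books.",
    }
    rerun = {
        "best invoicing software for freelancers": "Top picks: Acme Invoice and Zeta Books.",
        "invoicing tool with recurring billing": "Consider Omega Pay or Zeta Books.",
    }
    print(compare_runs("Acme Invoice", baseline, rerun))
```

Scoring only the prompts present in both runs keeps the comparison honest if the prompt list drifts over the 90 days. A rising `rerun_rate` with a non-empty `newly_cited` list corresponds to the "unmentioned to cited" outcome described above.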

For e-commerce brands especially, review signals are one of the fastest levers to move. Our e-commerce AI shopping guide goes deeper on how product-level reviews feed AI shopping assistants. And if you want to see which review platforms AI models are currently pulling from for your category, our GEO optimization service maps the exact citation sources. Volume is a vanity metric. Velocity is the one AI models actually weigh.