Site Speed, Core Web Vitals, and AI Crawlability

Which site speed metrics actually matter for AI crawlers, and why can a 95 PageSpeed score still leave your pages invisible to GPTBot?

Core Web Vitals were designed for human users. AI crawlers do not weigh your Largest Contentful Paint, Cumulative Layout Shift, or Interaction to Next Paint the way Google's ranking systems do. What they care about is Time To First Byte, crawl budget, and whether your critical content is present in the initial HTML payload. That is where most "fast" sites quietly fail. A page that scores 95 on PageSpeed Insights can still be invisible to GPTBot if the answer lives inside a JavaScript-rendered component that loads after first paint. Stop optimizing for Lighthouse and start optimizing for what the bots actually see.

What AI Crawlers Actually Measure

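Most AI crawlers (GPTBot, PerplexityBot, ClaudeBot) run on tighter timeouts than search-engine crawlers such as Googlebot. They fetch the HTML, look at response size and content density, and render JavaScript minimally or not at all. Google-Extended is often listed alongside them, but it is a robots.txt control token rather than a separate crawler: the fetching itself is done by Google's existing crawlers. Our piece on how AI models crawl web content covers the behavior.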

If your core content depends on hydration or a client-side fetch, the bot misses it. Your SEO score looks clean because Googlebot handles JS better than most AI crawlers. Your AI visibility is quietly terrible.
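A rough way to test for this gap is to look only at the text a non-rendering client could read from the raw response. The sketch below is a minimal illustration using Python's standard library; the class name, function names, and sample pages are hypothetical, and it assumes a crawler that ignores everything inside `script`, `style`, and `template` tags.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text that sits outside script, style, and template tags."""

    SKIPPED_TAGS = {"script", "style", "template"}

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a skipped tag.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    """Return the text a client would see without executing any JavaScript."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def phrase_in_raw_html(raw_html: str, phrase: str) -> bool:
    """Case-insensitive check that a key phrase is present before JS runs."""
    return phrase.lower() in visible_text(raw_html).lower()


# Hypothetical sample pages: a client-rendered shell and a server-rendered page.
SHELL_PAGE = '<html><body><div id="root"></div><script>fetch("/api/answer")</script></body></html>'
RENDERED_PAGE = "<html><body><h1>Pricing</h1><p>Plans start at $10 per month.</p></body></html>"

if __name__ == "__main__":
    print(phrase_in_raw_html(SHELL_PAGE, "plans start at $10"))
    print(phrase_in_raw_html(RENDERED_PAGE, "plans start at $10"))
```

Feeding it the raw response body for a page (fetched without a browser) and the sentence you expect to be cited gives a quick yes or no per URL.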

The Four Metrics That Matter

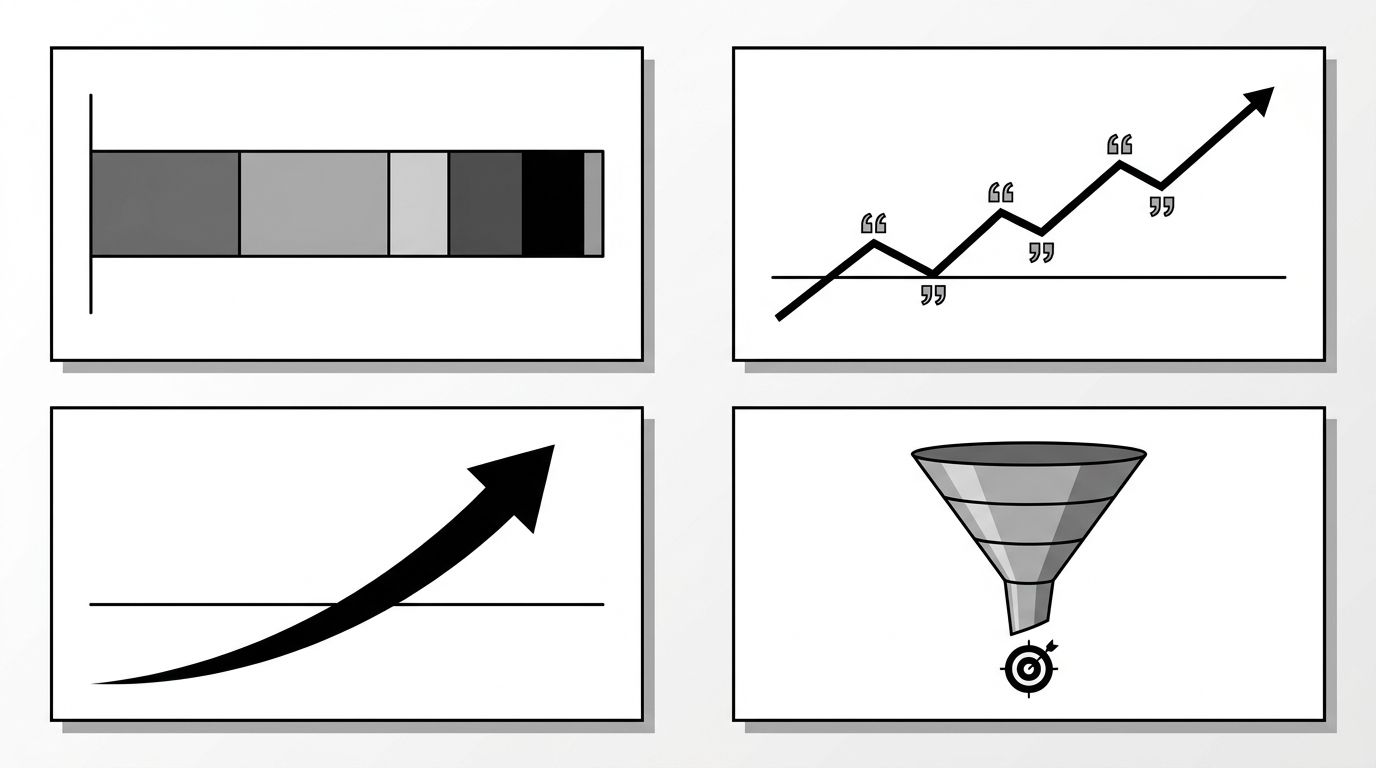

Forget the consumer-facing Core Web Vitals for a moment. The four signals AI retrieval systems actually weight are:

- Time To First Byte (TTFB). How fast your server responds. Below 400ms is the bar; below 200ms is safe. Slow TTFB gets your page deprioritized or skipped during crawl cycles.

- Initial HTML payload completeness. Whether the main content, headings, and structured data appear in the HTML before any JavaScript runs. Load the page in a private window with JS disabled, or view the raw source. If the answer is not there, a non-rendering bot is not seeing it.

- Response size and density. Oversized HTML (2MB+ pages) and content buried under script and style tags reduce extraction quality. Keep critical content in the first 100KB.

- Crawl budget efficiency. How many of your URLs the bot successfully fetches per session. Broken internal links, redirect chains, and 5xx errors burn budget and reduce indexed depth.
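The first three signals can be turned into simple checks. The following is a minimal sketch, not a definitive tool: the 200ms, 400ms, 100KB, and 2MB thresholds come from the list above, while the function names and the `ttfb-check/0.1` user agent are hypothetical. Note that the timing function also includes DNS, connection, and TLS setup, so it overstates pure server response time.

```python
import time
import urllib.request

TTFB_SAFE_MS = 200                   # "safe" threshold from the list above
TTFB_BAR_MS = 400                    # upper bar from the list above
CRITICAL_WINDOW_BYTES = 100 * 1024   # "first 100KB"
OVERSIZED_BYTES = 2 * 1024 * 1024    # "2MB+ pages"


def classify_ttfb(ttfb_ms: float) -> str:
    """Bucket a TTFB measurement using the thresholds above."""
    if ttfb_ms < TTFB_SAFE_MS:
        return "safe"
    if ttfb_ms < TTFB_BAR_MS:
        return "acceptable"
    return "slow"


def measure_ttfb_ms(url: str, timeout: float = 10.0) -> float:
    """Approximate TTFB: time from request start until the first body byte.

    Includes DNS, connect, and TLS time, so treat it as an upper bound.
    """
    request = urllib.request.Request(url, headers={"User-Agent": "ttfb-check/0.1"})
    start = time.perf_counter()
    with urllib.request.urlopen(request, timeout=timeout) as response:
        response.read(1)
    return (time.perf_counter() - start) * 1000.0


def payload_report(html_bytes: bytes, phrase: str) -> dict:
    """Report total size and where a key phrase first appears in the raw HTML."""
    offset = html_bytes.find(phrase.encode("utf-8"))  # case-sensitive; -1 if absent
    return {
        "total_bytes": len(html_bytes),
        "oversized": len(html_bytes) >= OVERSIZED_BYTES,
        "phrase_offset": offset,
        "in_critical_window": 0 <= offset < CRITICAL_WINDOW_BYTES,
    }
```

Running `measure_ttfb_ms` from machines in several regions and passing each result to `classify_ttfb` gives a quick per-region view; `payload_report` shows whether inline scripts and styles have pushed the answer past the first 100KB.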

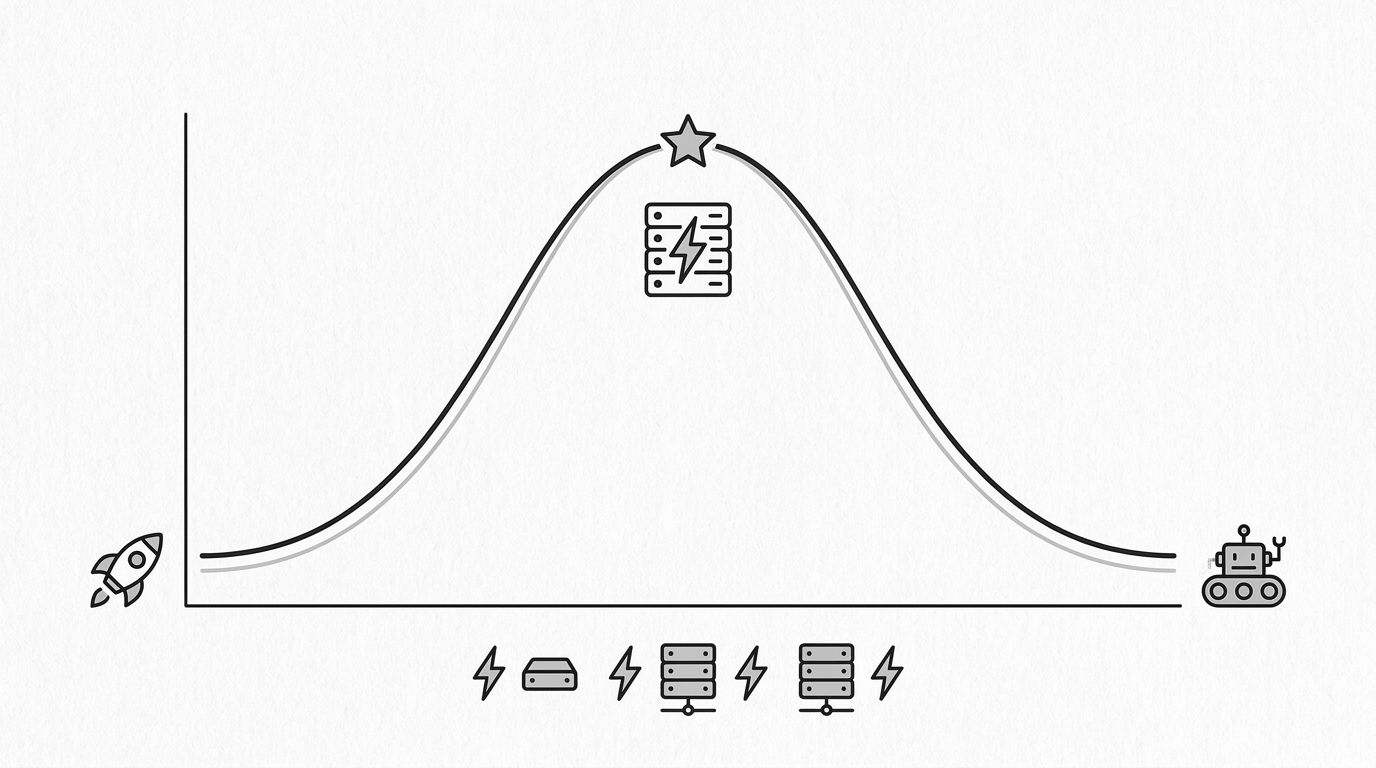

The curve above shows why extreme client-side performance optimization can hurt AI crawlability. A lean, fast, server-rendered page sits in the sweet spot. A heavily client-rendered SPA with perfect Lighthouse scores can sit outside it.

Where Core Web Vitals Still Help

Core Web Vitals are not useless for GEO (generative engine optimization), but their effect is indirect. LCP pressure encourages SSR and image optimization, which helps AI bots. CLS pressure reduces layout thrashing that can confuse extraction. INP is mostly irrelevant to AI crawlers but matters for human users who still need to convert after they find you.

The mistake is treating Core Web Vitals as the whole picture. They are a subset, not the full set. We unpack the broader gap in technical GEO vs technical SEO: the two disciplines overlap on speed but diverge sharply on rendering, crawler access, and structured-data priority.

The Server-Side Rendering Tradeoff

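React, Next.js, and similar frameworks can render server-side, client-side, or hybrid. The hybrid pattern (streaming SSR with hydration) is the most common and the most likely to fail silently for AI crawlers, because the server can return a valid-looking page while the main content only arrives once client-side code runs.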

If your page uses client-side fetching for the main content block, a non-JS AI crawler sees a shell. Options: full SSR for content-critical pages, static generation for pages that do not need per-request data, or streaming SSR with critical content inlined so the answer is in the first payload.
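To make the last option concrete, here is a framework-agnostic sketch of the ordering idea, written in Python rather than a JavaScript framework. It is only an illustration under stated assumptions: `stream_page` and its parameters are hypothetical names, and the point is simply that the first chunk sent to the client already contains the rendered answer, while secondary fragments follow later.

```python
import html
from typing import Iterable, Iterator


def stream_page(title: str, critical_html: str, deferred_html: Iterable[str]) -> Iterator[str]:
    """Yield an HTML document in chunks, critical content first.

    critical_html is assumed to be already rendered on the server; deferred_html
    holds secondary fragments (related links, comments) that can arrive later.
    """
    # First chunk: document head plus the main content, fully rendered.
    yield (
        f"<!doctype html><html><head><title>{html.escape(title)}</title></head>"
        f"<body><main>{critical_html}</main>"
    )
    # Later chunks: non-critical fragments, streamed as they become available.
    for fragment in deferred_html:
        yield fragment
    # Final chunk closes the document.
    yield "</body></html>"


if __name__ == "__main__":
    chunks = list(
        stream_page(
            "Pricing",
            "<p>Plans start at $10 per month.</p>",
            ["<aside>Related articles</aside>"],
        )
    )
    print(chunks[0])
```

A crawler that stops reading after the first chunk, or never executes scripts, still receives the answer. In a real stack the same ordering decision is made in the framework's server component or data-loading layer.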

Our technical SEO service audits sites for this bot-visibility gap, not just human-facing speed metrics.

Practical Fixes That Move AI Citations

Run these checks on your ten highest-priority pages. Any failure is a likely cause of low AI citation rates. For the full sweep, our technical GEO checklist walks through every infrastructure check in sequence.

- Disable JS and view source. Is the main content in the HTML? If not, ship SSR for that route.

- Check TTFB from multiple regions. A misconfigured CDN can make one region 600ms+ while your home region is 150ms.

- Audit robots.txt and sitemap. Confirm that the AI crawler user agents you want are allowed and that the sitemap reflects current URLs.

- Trim HTML response size. Remove or externalize inline scripts and styles that push content past the first 100KB.

- Fix redirect chains. One 301 is fine; three hops burn crawl budget.
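Two of these checks, the robots.txt audit and the redirect-chain audit, are easy to script. The sketch below uses Python's standard `urllib.robotparser`; the agent list, function names, and the idea of feeding in a redirect map exported from a crawl are assumptions for illustration, not a prescribed workflow.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens named earlier in this article; extend as needed.
AI_USER_AGENTS = ("GPTBot", "PerplexityBot", "ClaudeBot")


def blocked_agents(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> list[str]:
    """Return the agents that the given robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


def redirect_hops(start_url: str, redirects: dict[str, str], max_hops: int = 10) -> int:
    """Count redirect hops from start_url using a source -> target map.

    The map is assumed to come from a crawl export or server config.
    Stops at max_hops or when a loop is detected.
    """
    hops = 0
    current = start_url
    seen = {current}
    while current in redirects and hops < max_hops:
        current = redirects[current]
        hops += 1
        if current in seen:  # redirect loop
            break
        seen.add(current)
    return hops


if __name__ == "__main__":
    sample_robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_agents(sample_robots, "https://example.com/pricing"))

    sample_redirects = {"/old": "/older", "/older": "/new", "/new": "/final"}
    print(redirect_hops("/old", sample_redirects))
```

Any URL where `blocked_agents` returns a non-empty list, or where `redirect_hops` reports more than one hop, is worth a closer look.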

Our guide on technical GEO goes deeper on crawl infrastructure.

The Measurement Loop

Measure AI citation rate before and after technical changes. If you retrofit SSR on a page and Perplexity citations lift within two to three weeks, you have strong evidence that rendering was the bottleneck. If they do not, the issue is more likely content or authority.

Most teams skip this and attribute any lift to the most recent change. Run a small A/B across comparable pages to separate content effects from technical effects.
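One simple way to frame that comparison is a difference-in-differences calculation: subtract the change seen on untouched control pages from the change seen on the pages you fixed. This is a sketch of that arithmetic, not a method the measurement loop prescribes. The function names are hypothetical and the sample counts are invented purely to show the calculation.

```python
def citation_rate(cited: int, prompts: int) -> float:
    """Share of tracked prompts in which the page was cited."""
    if prompts <= 0:
        raise ValueError("prompts must be positive")
    return cited / prompts


def lift_vs_control(
    treated_before: float,
    treated_after: float,
    control_before: float,
    control_after: float,
) -> float:
    """Change on treated pages minus change on comparable control pages."""
    return (treated_after - treated_before) - (control_after - control_before)


if __name__ == "__main__":
    # Invented numbers for illustration only: 50 tracked prompts per group.
    treated_before = citation_rate(4, 50)
    treated_after = citation_rate(11, 50)
    control_before = citation_rate(5, 50)
    control_after = citation_rate(6, 50)

    net = lift_vs_control(treated_before, treated_after, control_before, control_after)
    print(f"Net lift attributable to the change: {net:.2%}")
```

A net lift near zero suggests the movement on the treated pages was shared by the controls, which points away from the technical change as the cause.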

Where to Start

If your stack is JavaScript-heavy and your AI citation rate lags your SEO visibility, technical crawlability is a likely bottleneck. Start with SSR on your highest-intent pages, then measure. If you want a second pair of eyes on your site's bot-visibility profile, run a free audit and we will flag the gaps.