Crisis Management in the Age of AI: When AI Amplifies Bad Press

When a crisis breaks, your communications team has roughly 48 hours before the early framing settles into the sources AI models retrieve from. Traditional PR playbooks assume journalists are the amplifiers, so the response centers on press releases, executive statements, and media briefings. That playbook no longer covers the actual amplifier. ChatGPT, Perplexity, and Gemini now summarize incidents for millions of users within a day, and whichever version of events dominates the indexed web during that window becomes the answer people get for months. Crisis management in the age of AI is less about controlling the narrative and more about controlling the sources AI models pull from.

Why the First 48 Hours Matter More Than They Used To

Before AI summarization, a crisis story decayed as new coverage pushed it off the front page. Now the story gets compressed into a paragraph and served on demand for years. If a reporter's first draft contained an error, that error is the one AI repeats. If the first 20 articles frame the story as a scandal, AI defaults to "scandal" even after facts shift.

Retrieval-based models weight recency and volume. During a breaking event, you get both working against you. Every outlet republishing a wire story counts as a separate source, and models treat that repetition as consensus. Our piece on how AI models choose which brands to mention explains the weighting logic.

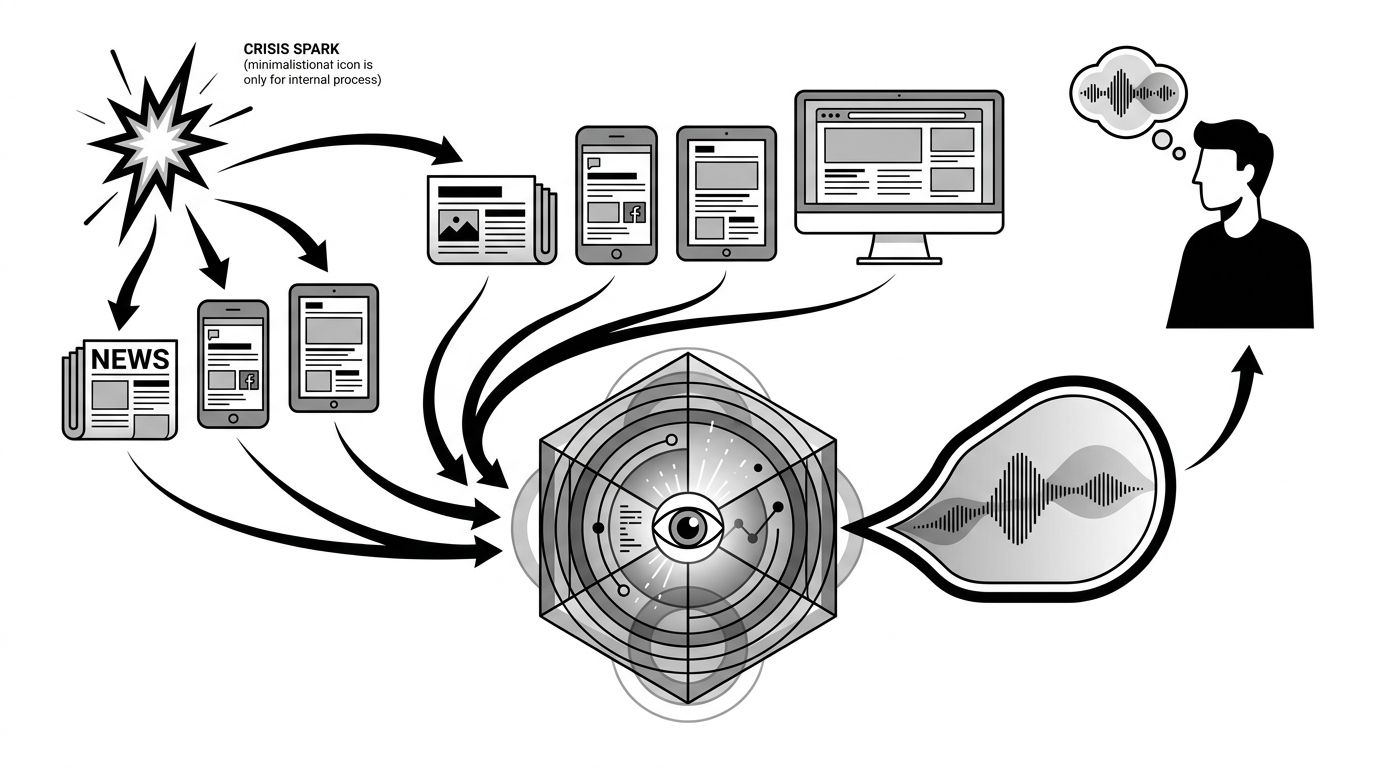

What a Crisis Looks Like Inside an AI Model

You cannot respond to something you have not measured. Run a structured prompt set against ChatGPT, Perplexity, Gemini, and Copilot within four hours of any incident. Capture the top three framings each model uses, which sources get cited by name, whether your official statement appears, and sentiment drift from your pre-crisis baseline.
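One way to keep these captures comparable across models is to store each audit as a small record. The sketch below is illustrative only: the `ModelAudit` fields, the model names, the `[-1, 1]` sentiment scale, and the baseline value are all assumptions, and the scores are assumed to come from a human reviewer or a classifier you already trust.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ModelAudit:
    """One model's results for the prompt set (hypothetical structure)."""
    model: str
    framings: list[str]            # top framings observed, most common first
    cited_sources: list[str]       # domains named in the answers
    statement_cited: bool          # did the official statement appear?
    sentiment_scores: list[float]  # one score per prompt, assumed in [-1, 1]


def sentiment_drift(audit: ModelAudit, baseline: float) -> float:
    """Mean sentiment across prompts minus the pre-crisis baseline."""
    return mean(audit.sentiment_scores) - baseline


def missing_statement(audits: list[ModelAudit]) -> list[str]:
    """Models whose answers never surfaced the official statement."""
    return [a.model for a in audits if not a.statement_cited]


# Made-up example data for two models.
audits = [
    ModelAudit("model_a", ["data breach", "slow disclosure", "refunds"],
               ["wire.example", "trade.example"], False, [-0.6, -0.4, -0.5]),
    ModelAudit("model_b", ["data breach", "refunds", "new policy"],
               ["wire.example", "brand.example"], True, [-0.2, 0.0, -0.1]),
]
PRE_CRISIS_BASELINE = 0.2  # assumed value from an earlier audit

for audit in audits:
    print(audit.model, round(sentiment_drift(audit, PRE_CRISIS_BASELINE), 2))
print("Statement missing in:", missing_statement(audits))
```

Re-running the same prompt set on a fixed schedule and appending new records makes the drift visible over time rather than as a single snapshot.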

This gives you a source map. Your response targets the sources that show up in AI answers, not the ones that feel most important in your war room.
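A source map can be as simple as a ranked count of which domains the models cite. This is a minimal sketch under stated assumptions: the domains and model names are placeholders, and ranking by "number of models citing" before "total citations" is one reasonable ordering, not an established rule.

```python
from collections import Counter

# Hypothetical capture: model name -> domains cited across the prompt set.
citations_by_model = {
    "model_a": ["wire.example", "trade.example", "wire.example"],
    "model_b": ["wire.example", "forum.example"],
    "model_c": ["trade.example", "wire.example", "brand.example"],
}


def build_source_map(citations: dict[str, list[str]]) -> list[tuple[str, int, int]]:
    """Return (domain, models_citing, total_citations), most influential first."""
    total = Counter()   # every citation counts
    models = Counter()  # each model counts once per domain
    for domains in citations.values():
        total.update(domains)
        models.update(set(domains))
    return sorted(
        ((d, models[d], total[d]) for d in total),
        key=lambda row: (-row[1], -row[2], row[0]),
    )


source_map = build_source_map(citations_by_model)
for domain, n_models, n_citations in source_map:
    print(f"{domain}: cited by {n_models} model(s), {n_citations} time(s)")
```

The domains at the top of this list are the ones the response should target first, whatever their reputation in the war room.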

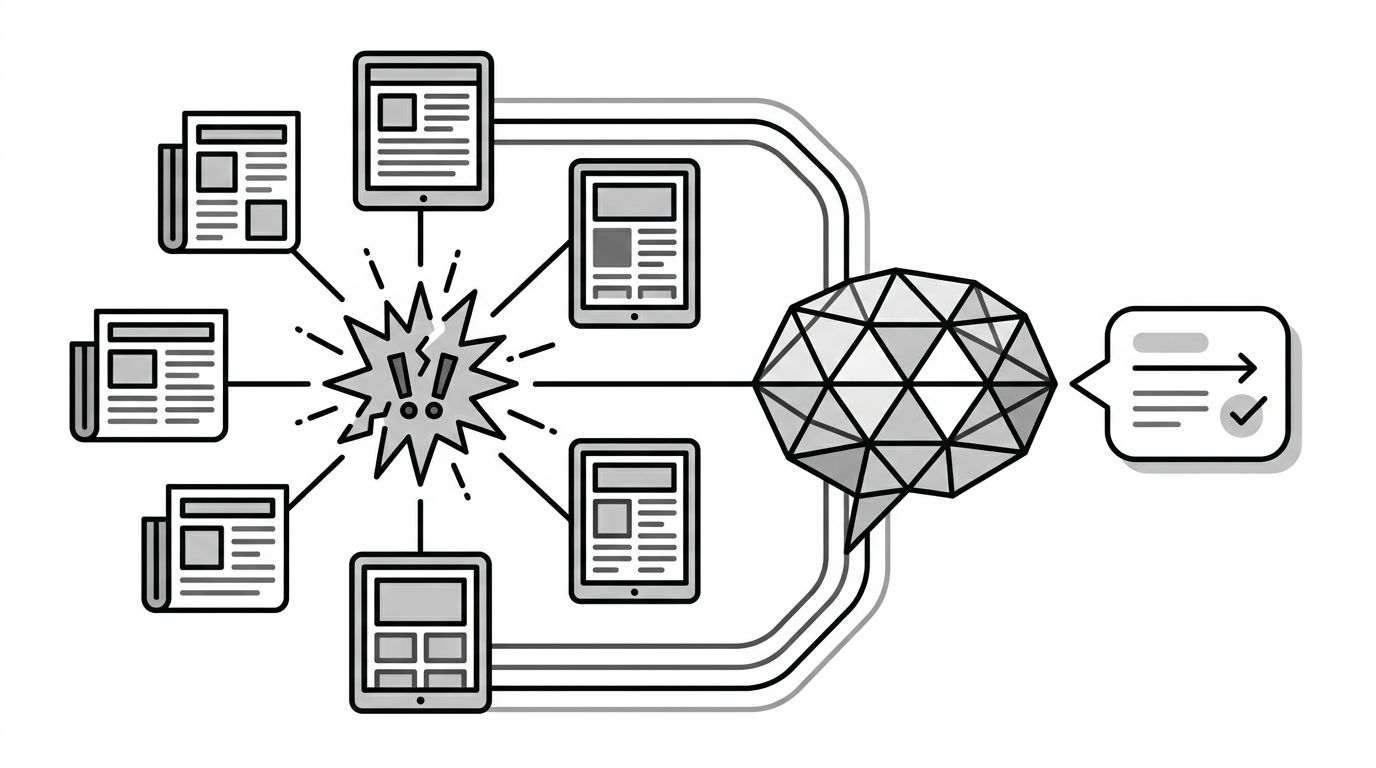

The diagram above shows the amplification loop. One event produces many sources, which the AI collapses into one dominant narrative. Your intervention has to happen at the source layer, not the output layer.

The Three-Track Response

Most brands run a single-track crisis response. In AI environments, you need three parallel tracks.

Track 1: Official Record on Owned Properties

Publish a dated, factual incident page on your own domain within hours. Put it on the primary domain itself, not in a press release on a separate newsroom subdomain. State what happened, what you did, and what changed. Mark it up with Article schema. This becomes the citable first-party source that AI models prefer when one exists.
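For the Article markup, a small script can generate the JSON-LD so the dates stay consistent with the page. The sketch below uses standard schema.org `Article` properties; the headline, URL, organization name, and dates are placeholders, and the helper name `incident_article_jsonld` is hypothetical.

```python
import json
from datetime import date


def incident_article_jsonld(headline: str, url: str, published: date,
                            modified: date, org_name: str) -> dict:
    """Build schema.org Article markup for a dated incident page."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {"@type": "Organization", "name": org_name},
        "publisher": {"@type": "Organization", "name": org_name},
    }


# Placeholder values for illustration only.
markup = incident_article_jsonld(
    headline="Incident report: what happened, what we did, what changed",
    url="https://www.example.com/incident-report",
    published=date(2025, 1, 15),
    modified=date(2025, 1, 16),
    org_name="Example Corp",
)

# Embed the result in the page head as a JSON-LD script tag.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Updating `dateModified` each time the page changes keeps the record visibly current as the facts develop.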

Track 2: Third-Party Corrections

Reach the outlets that published first, not the ones with the biggest reach. First-published articles get indexed first and get more backlinks. Correct factual errors in those specific pieces. Our guide on tracking AI citations covers how to identify which pages models are actually pulling from.
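The prioritization can be sketched as a sort over the articles that contain the error. The ordering here is an assumption made for illustration: pages already cited in AI answers come first, and within each group the earliest publication wins. The `Article` fields and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Article:
    url: str
    published: datetime
    ai_citations: int  # times this page appeared in audited AI answers
    has_error: bool    # does it contain the factual error?


def correction_queue(articles: list[Article]) -> list[Article]:
    """Articles needing correction: AI-cited pages first, then earliest published."""
    candidates = [a for a in articles if a.has_error]
    return sorted(candidates, key=lambda a: (a.ai_citations == 0, a.published))


# Made-up coverage from the first day of an incident.
articles = [
    Article("https://a.example/story", datetime(2025, 1, 15, 9, 0), 0, True),
    Article("https://b.example/story", datetime(2025, 1, 15, 9, 30), 3, True),
    Article("https://c.example/story", datetime(2025, 1, 15, 8, 0), 5, False),
    Article("https://d.example/story", datetime(2025, 1, 15, 12, 0), 1, True),
]

queue = correction_queue(articles)
for article in queue:
    print(article.published.isoformat(), article.url)
```

Note that audience size does not appear in the sort key at all, which mirrors the point above that reach is not the deciding factor.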

Track 3: Counter-Narrative Volume

A single correction does not outweigh 40 articles repeating the original frame. You need accurate, varied, authoritative content in the same topic cluster: customer testimonials, expert commentary, and explainer content. Expect this to reset the baseline over four to twelve weeks.

What Not to Do

Do not rely on platform takedown requests. OpenAI, Anthropic, and Google have reporting channels, but they are built for copyright and policy issues, not factual disputes. Even a successful request applies to only one model version.

Do not ask outlets to delete content that contains the error. If an article misquotes you, asking for a correction preserves the URL and its link equity. Deletion removes a citation you could otherwise have had corrected.

Do not flood the zone with generic reassurance. AI models weight specificity. "We take this seriously" disappears into noise. Specific statements with dates, names, and actions get cited.

Building a Pre-Crisis Position

Brands that handle AI-amplified crises well built their source layer before anything went wrong. This is defensive GEO (generative engine optimization). Our enterprise solution focuses on systematic source control, and our insurance case study shows what this looks like in a regulated industry.

Pre-crisis work includes a brand facts page with Organization schema, executive bios with Person schema, a regularly updated transparency page, dated policy documents, and relationships with independent experts who can comment during a crisis. These assets give models authoritative first-party context to weigh against breaking news.
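The Organization and Person markup can share one JSON-LD graph so that each executive bio points back to the brand entity. This is a rough sketch: the `@id` value, names, and titles are placeholders, and `brand_graph` is a hypothetical helper. The properties used (`name`, `url`, `jobTitle`, `worksFor`) are standard schema.org terms.

```python
import json

# Placeholder identifier for the organization node.
ORG_ID = "https://www.example.com/#organization"


def brand_graph(org_name: str, org_url: str,
                executives: list[tuple[str, str]]) -> dict:
    """Build one JSON-LD graph with an Organization and linked Person nodes."""
    graph = [{
        "@type": "Organization",
        "@id": ORG_ID,
        "name": org_name,
        "url": org_url,
    }]
    for name, title in executives:
        graph.append({
            "@type": "Person",
            "name": name,
            "jobTitle": title,
            "worksFor": {"@id": ORG_ID},  # link each person to the organization
        })
    return {"@context": "https://schema.org", "@graph": graph}


# Made-up names for illustration.
facts = brand_graph(
    "Example Corp",
    "https://www.example.com",
    [("Jane Doe", "Chief Executive Officer"),
     ("John Roe", "Chief Security Officer")],
)
print(json.dumps(facts, indent=2))
```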

If AI platforms influence even 5% of your buyers' research, crisis response deserves a dedicated line item. The alternative is watching AI models cite the wrong framing for the next six quarters.