Multimodal AI: How Images, Video, and Audio Shape AI Recommendations

Do AI models actually read your images, videos, and alt text, and how are those signals changing which brands they recommend?

AI models already weight your images, video transcripts, and audio captions as first-class brand signals. Most brands still treat them as SEO afterthoughts. That gap is becoming the cheapest arbitrage in GEO: multimodal content is under-optimized, the scoring methods are public, and the same asset that gets ignored on a Google image search might be the thing that decides whether GPT-4o mentions your brand at all.

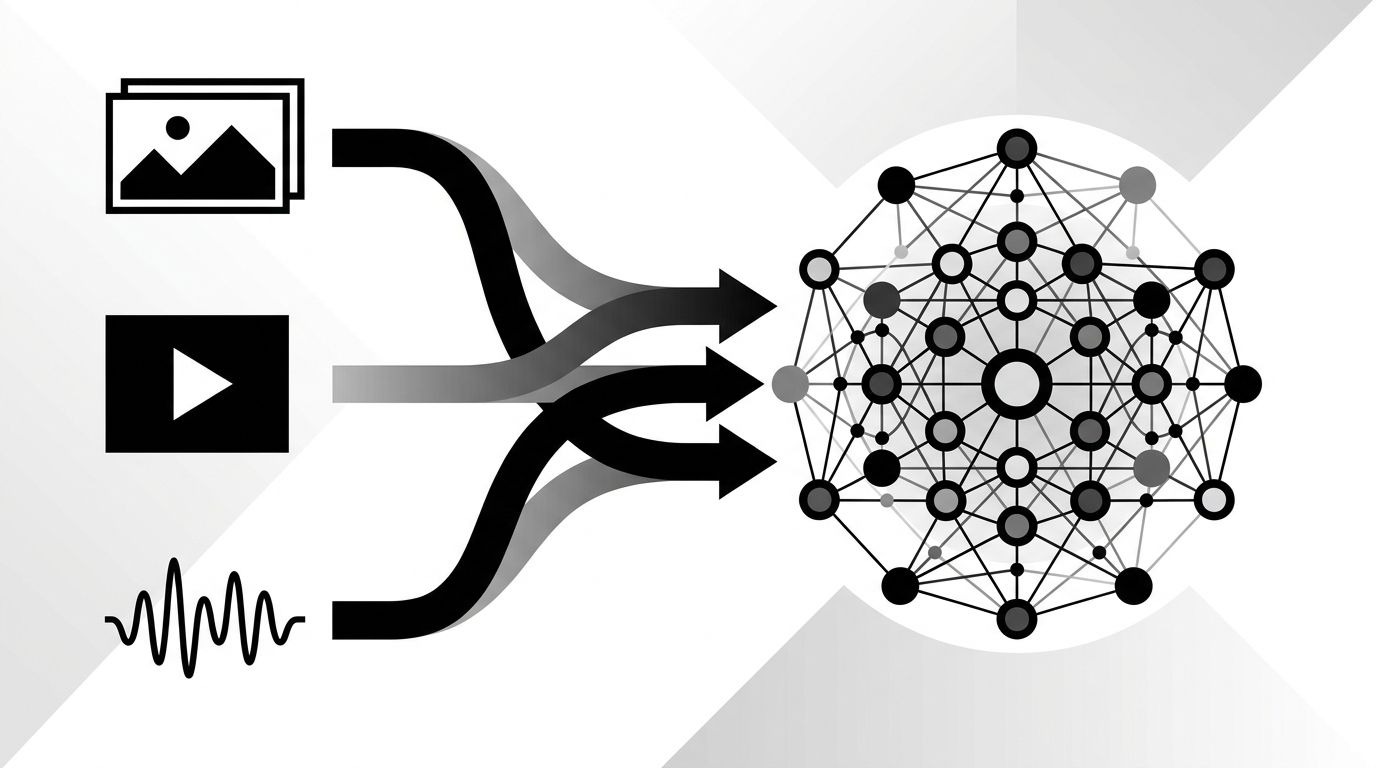

Multimodal Is Already Here

Every frontier model shipped in the last 18 months accepts images as input, and several also handle audio or video natively. GPT-4o, Gemini 1.5, and Claude 3.5 Sonnet all read image content at training time and at retrieval time. That means product photography, diagrams on landing pages, and YouTube video transcripts feed directly into how a model represents your brand.

The practical consequence: if your visual assets are thin, generic, or missing alt text, you're invisible at a layer most brands haven't even started measuring.

Three Multimodal Signals AI Models Actually Use

Not every pixel matters. Models rely on a small set of structured signals that sit on top of your visual assets.

- Alt text and captions. These are the most direct textual interpretation of an image. AI models weight them heavily because they state explicitly what a human meant the visual to convey.

- Video transcripts. YouTube's auto-captions, Vimeo transcripts, and podcast show notes get indexed and cited. Long-form video content with clean transcripts often outperforms written content on recall.

- Image embeddings. Models generate their own vector representations of images, so visual similarity matters. Two products that look alike can get merged in a model's representation even when the brand signals differ.
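To make the embedding point concrete, here is a minimal sketch of how visual similarity is usually measured between two image vectors. The three-dimensional vectors and the product names are hypothetical toy values chosen for illustration; real image embeddings have hundreds or thousands of dimensions, and how any production model actually uses them is not public.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings, not output from any real model.
our_product = [0.9, 0.1, 0.3]
lookalike_product = [0.8, 0.2, 0.3]
unrelated_product = [0.1, 0.9, 0.2]

sim_lookalike = cosine_similarity(our_product, lookalike_product)
sim_unrelated = cosine_similarity(our_product, unrelated_product)

print(f"lookalike: {sim_lookalike:.3f}")
print(f"unrelated: {sim_unrelated:.3f}")
```

The closer the score is to 1.0, the harder it is to tell the two images apart from pixels alone, which is why the text signals around the image carry so much of the brand distinction.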

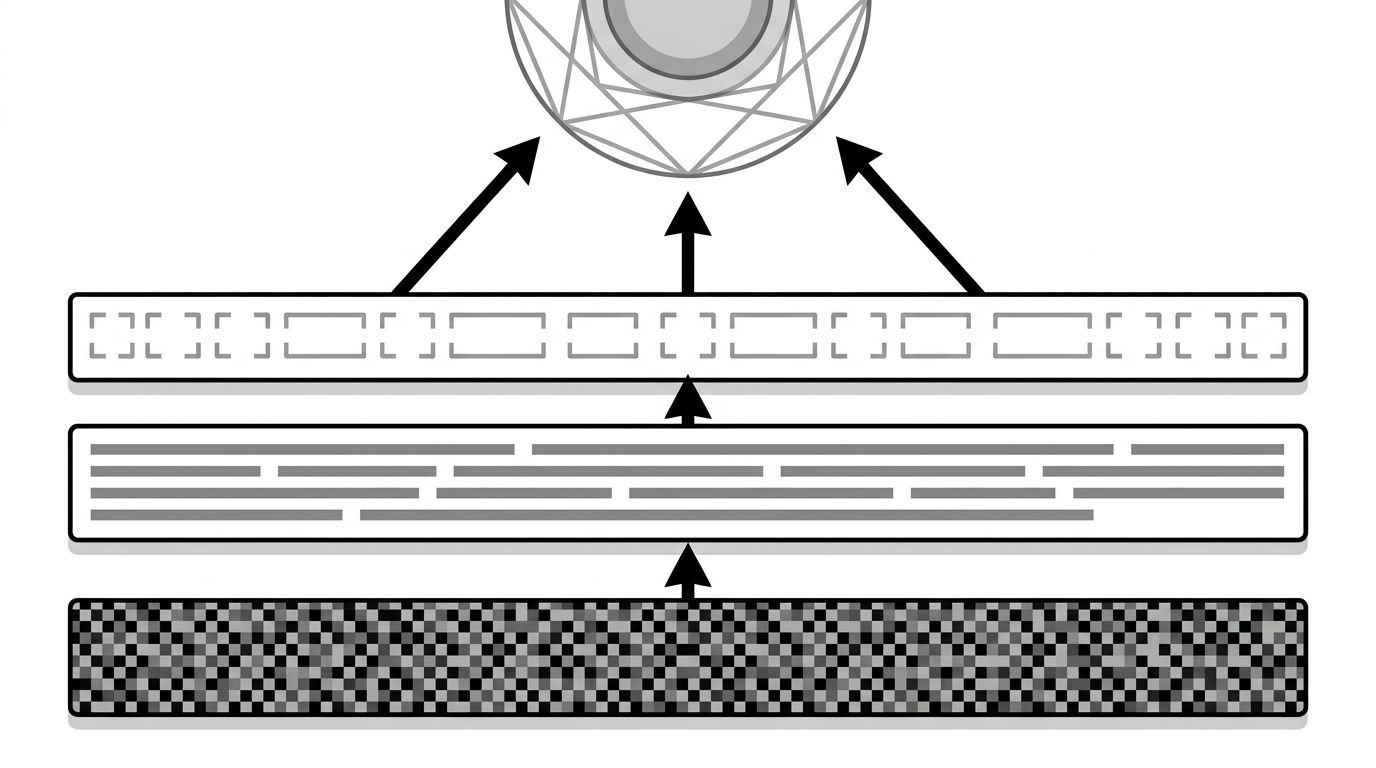

The diagram above shows how these three signals stack into a model's brand representation.

Get any one of these wrong and your brand's signal strength drops. Get all three right and you start showing up in queries your competitors don't know exist.

Where Brands Are Leaving Signal on the Table

Three common failures account for most of the gap I see across content audits.

- Generic alt text. "Image of laptop" or "product shot" teaches the model nothing. Alt text should describe the specific object, context, and brand relationship. "Geology dashboard showing AI citation tracking across four platforms" is a brand signal. "Screenshot" is not.

- Unpublished transcripts. Brands record podcasts and webinars, post them, and never publish the transcript. The audio content exists but can't be retrieved by models that rely on text indexing.

- Recycled stock imagery. Popular stock photos show up again and again across the web, so they are likely to appear many times in training data. They carry little to no brand signal. Original photography, even imperfect, teaches the model something.
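The first failure is the easiest to check automatically. Below is a rough sketch of an alt text audit using only Python's standard library. The `GENERIC_ALT` phrase set and the "image of" prefix rule are assumptions for illustration, not an established standard, so tune them to your own content. Note that an empty `alt=""` is legitimate for purely decorative images, so treat "missing" findings as prompts for review rather than automatic errors.

```python
from html.parser import HTMLParser

# Assumed placeholder phrases; extend this set for your own site.
GENERIC_ALT = {"image", "photo", "picture", "screenshot", "product shot", "logo"}


class AltTextAudit(HTMLParser):
    """Collects (src, problem) pairs for <img> tags with weak alt text."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src") or ""
        alt = (attr.get("alt") or "").strip()
        if not alt:
            self.findings.append((src, "missing alt text"))
        elif alt.lower() in GENERIC_ALT or alt.lower().startswith("image of"):
            self.findings.append((src, "generic alt text"))


def audit_html(html):
    """Return a list of (src, problem) tuples for one HTML document."""
    parser = AltTextAudit()
    parser.feed(html)
    return parser.findings


sample = """
<img src="IMG_3421.png" alt="Screenshot">
<img src="hero.png">
<img src="laptop.png" alt="Image of laptop">
<img src="geology-citation-dashboard-q1-2026.png"
     alt="Geology dashboard showing AI citation tracking across four platforms">
"""

for src, problem in audit_html(sample):
    print(f"{src}: {problem}")
```

Run against the sample, the first three images are flagged and the descriptive one passes. Pointing `audit_html` at the saved HTML of your top pages gives a quick list of where to start rewriting.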

Our guide on content formats AI responses favor goes deeper on how different asset types get cited. The quick takeaway: original multimodal content with rich text context is the most underpriced asset class right now.

How to Make Your Visual Content Legible to AI

Treat every visual asset as a text problem first. Models still read text better than pixels.

- Write alt text that names the brand, the context, and the specific content of the image. Target 10 to 20 words per asset.

- Publish transcripts for every video and podcast. Place them on the same page as the embed, not on a subdomain.

- Use descriptive filenames. `geology-citation-dashboard-q1-2026.png` is a signal. `IMG_3421.png` is not.

- Add structured data. ImageObject and VideoObject schema make the relationship between asset and brand explicit. See our structured data guide for the specific fields that matter.

- Caption images in surrounding text. Every inline image should have a sentence before it that explains what the image shows and connects it to the argument.
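As a sketch of the structured data step, the snippet below builds a schema.org `ImageObject` as JSON-LD. The properties used (`contentUrl`, `name`, `caption`, `creator`) are real schema.org properties, but the choice of which ones to include is an assumption made here, not a list taken from the structured data guide. The `example.com` URL and the `image_object_jsonld` helper are hypothetical, and "Geology" is used as the brand name only because it appears in the alt text example earlier.

```python
import json


def image_object_jsonld(content_url, name, caption, brand_name):
    """Build a schema.org ImageObject as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "name": name,
        "caption": caption,
        # Ties the asset to the brand explicitly.
        "creator": {"@type": "Organization", "name": brand_name},
    }


markup = image_object_jsonld(
    # Hypothetical URL; the filename follows the descriptive pattern above.
    content_url="https://example.com/images/geology-citation-dashboard-q1-2026.png",
    name="Geology citation dashboard, Q1 2026",
    caption="Geology dashboard showing AI citation tracking across four platforms",
    brand_name="Geology",
)

# Paste the output inside a <script type="application/ld+json"> tag on the page.
print(json.dumps(markup, indent=2))
```

Reusing the alt text as the `caption` keeps the textual signals around the image consistent, which is the point of treating the asset as a text problem first.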

What to Prioritize First

If you only do one thing this quarter, rewrite alt text across your top 50 pages. It's the fastest win and the lowest effort. Second priority: publish transcripts for the five most-viewed videos or podcasts on your site. Third: swap stock photography on high-intent pages for original visuals.

Track changes in AI mention rate and citation frequency before and after. If you're not measuring those yet, our GEO optimization service can benchmark your multimodal signal strength against competitors in your category.
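For a do-it-yourself baseline, one simple way to measure mention rate is to collect model responses to a fixed set of prompts before and after your changes, then compare the share that name your brand. The sketch below assumes that definition of "mention rate" (share of sampled responses containing the brand name); the responses and the `ExampleBrand` name are made-up placeholders, not real model output.

```python
def mention_rate(responses, brand):
    """Fraction of responses that mention the brand, case-insensitive."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)


# Hypothetical responses to the same four prompts, sampled before and after.
before = [
    "Popular options include AcmeTrack and OtherTool.",
    "ExampleBrand is one choice for citation tracking.",
    "Most teams start with spreadsheets.",
    "AcmeTrack is the usual recommendation.",
]
after = [
    "ExampleBrand and AcmeTrack are both widely used.",
    "ExampleBrand is one choice for citation tracking.",
    "Most teams start with spreadsheets.",
    "AcmeTrack is the usual recommendation.",
]

rate_before = mention_rate(before, "ExampleBrand")
rate_after = mention_rate(after, "ExampleBrand")
change = rate_after - rate_before

print(f"before: {rate_before:.0%}, after: {rate_after:.0%}, change: {change:+.0%}")
```

Keep the prompt set and sample size the same across both runs. With only a handful of responses, a swing like this can easily be noise, so use a larger sample before drawing conclusions.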