AI Regulation and Brand Visibility: How Policy Will Shape GEO

How will upcoming AI regulation reshape which brands AI models recommend, and what compliance posture gives your content a retrieval advantage?

Most GEO conversations treat regulation as someone else's problem. That ends in 2026. The EU AI Act's transparency requirements, the FTC's rules on AI-generated endorsements, and state-level sourcing proposals are pushing AI platforms toward a structural preference for content that is easy to attribute, verify, and defend in a legal filing. Brands that publish with verifiable sources, explicit authorship, and traceable data earn a compliance premium in retrieval. Brands relying on anonymized content get filtered, first quietly, then by policy. If you are writing for AI visibility without a paper trail, you are optimizing for a regulatory regime that is already changing.

What Is Actually on the Books

Three regulatory vectors are moving at once, all pointing the same way.

EU AI Act (Article 50 and downstream obligations). Article 50 requires disclosure of AI-generated content and labeling of synthetic outputs. The separate obligations on providers of general-purpose AI models (Article 53) add a public summary of the content used for training. Downstream effect: AI platforms prefer clearly attributed sources they can cite without exposure.

FTC guidance on AI endorsements. Advertisers cannot rely on AI-generated testimonials or misleading AI recommendations. A brand that shows up in AI answers on the strength of unsubstantiated claims carries liability itself; the exposure does not stop at the AI provider.

State sourcing and disclosure proposals. California, Colorado, and New York have floated bills requiring LLM providers to disclose citation sources. Where this lands is uncertain; that it is coming is not.

Our piece on AI compliance for regulated industries covers the operational implications. This post focuses on retrieval implications for every brand.

The Compliance Premium in Retrieval

AI platforms are commercial operators. Their incentive is to minimize legal exposure while maintaining answer quality. That creates a structural bias toward content with clear provenance.

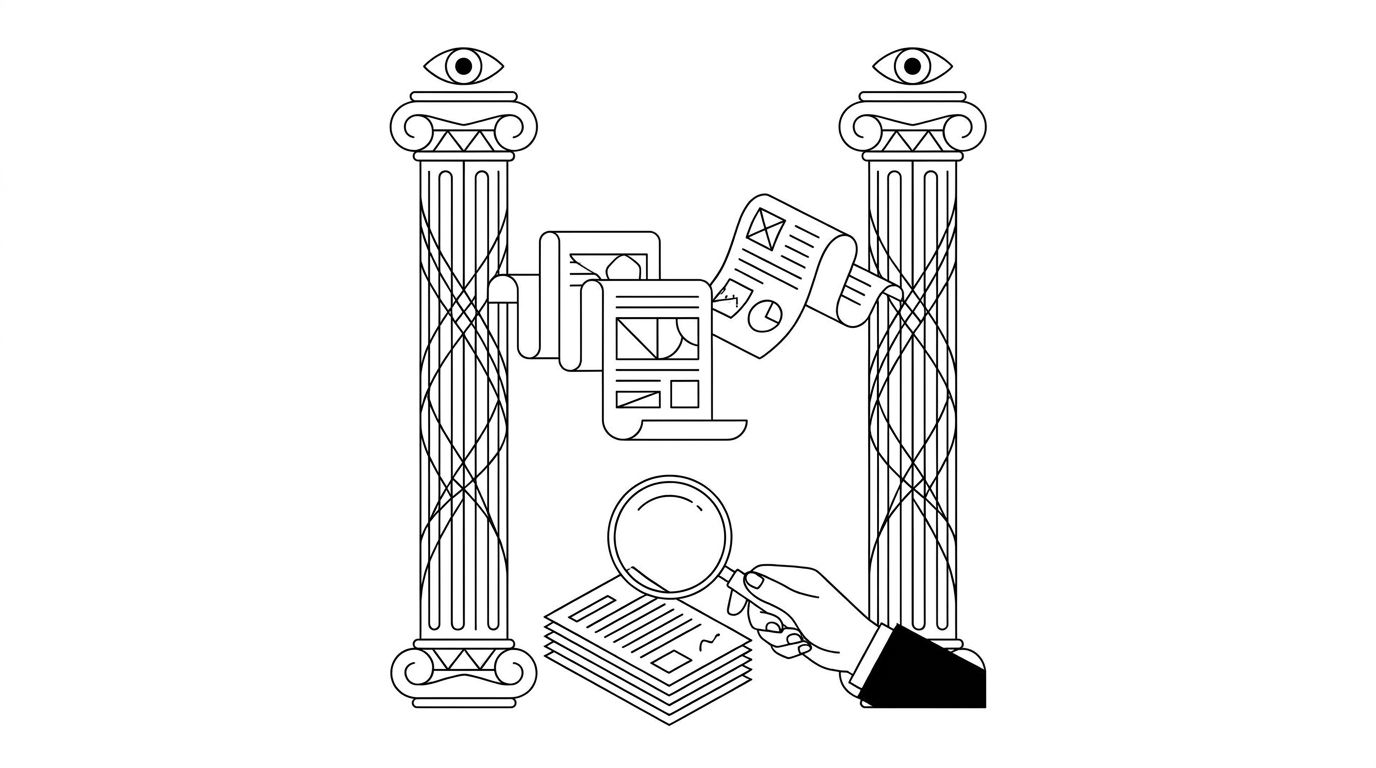

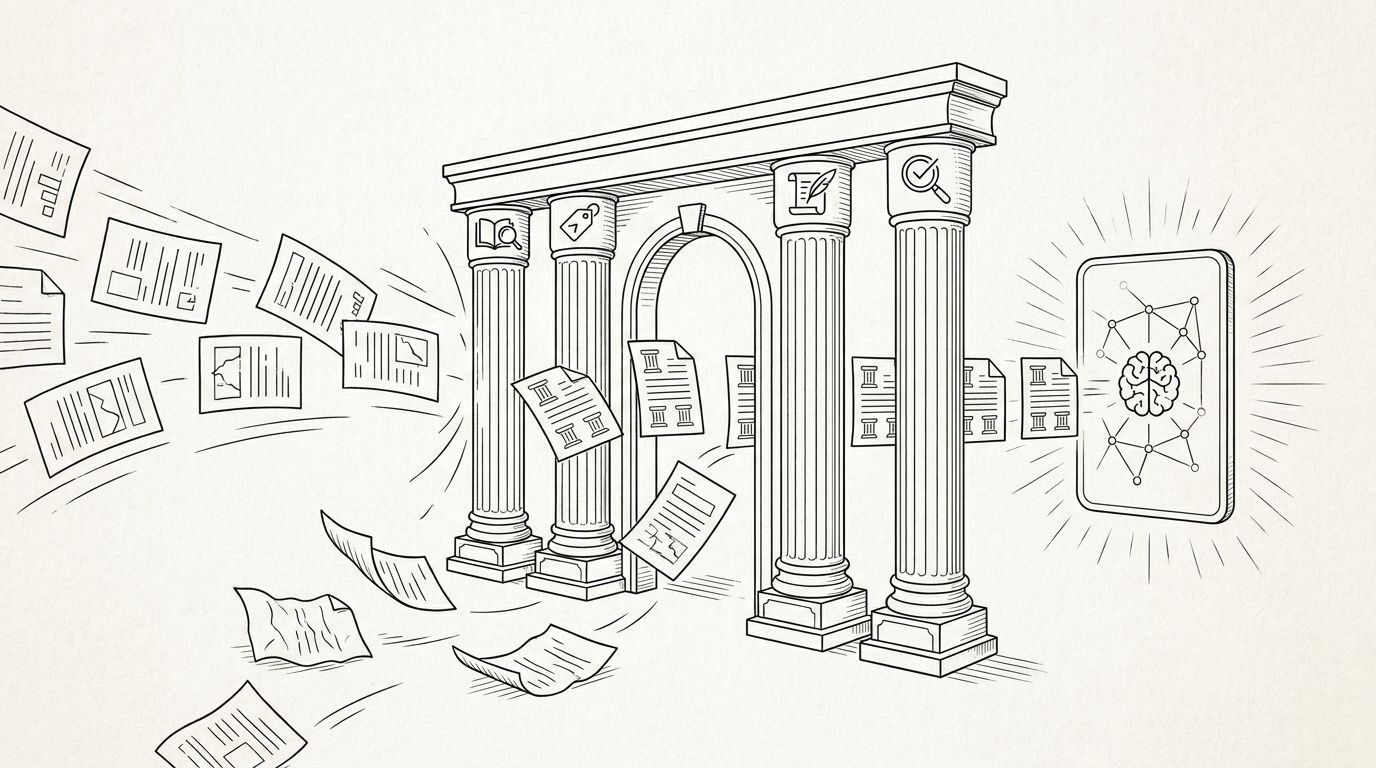

The diagram above captures the filter forming around AI retrieval. Content with four properties becomes easier to cite safely.

- Named authorship. A real person with a verifiable identity, credited on the page.

- Clear sourcing. Named data sources, cited studies, linked references. No "studies show" without a link.

- Timestamped content. Published and updated dates the platform can relay to the user.

- Structured data confirming all of the above. Schema.org Article and Person markup with author credentials.
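The four properties above can be expressed in a single block of structured data. Here is a minimal sketch in Python that builds Schema.org Article and Person markup as JSON-LD. The function name `build_article_jsonld`, the author, the URLs, and the dates are all hypothetical placeholders, and the property set is one reasonable selection, not a required minimum.

```python
import json
from datetime import date


def build_article_jsonld(headline, author_name, author_url, job_title,
                         published, modified, citations):
    """Return a Schema.org Article description as a JSON-LD-ready dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # Timestamped content: published and updated dates in ISO 8601.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        # Named authorship: a Person with a verifiable profile URL.
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,
            "jobTitle": job_title,
        },
        # Clear sourcing: the references the article relies on.
        "citation": list(citations),
    }


# Hypothetical values for illustration only.
markup = build_article_jsonld(
    headline="AI Regulation and Brand Visibility: How Policy Will Shape GEO",
    author_name="Jane Example",
    author_url="https://example.com/authors/jane-example",
    job_title="Head of Research",
    published=date(2025, 1, 15),
    modified=date(2025, 3, 1),
    citations=["https://example.com/source-study"],
)

# Embed the output in the page inside <script type="application/ld+json">.
print(json.dumps(markup, indent=2))
```

Generating the markup from the same fields that render the byline and dates on the page keeps the structured data from drifting out of sync with what readers see.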

Brands publishing this way get cited more. Brands that do not publish this way get filtered out as pressure tightens. Google's quality rater guidelines have moved in this direction for years, and AI platforms are adopting similar rubrics faster than search did.

What This Means for Your Content Playbook

Three shifts separate brands that benefit from regulatory drift from those that get squeezed.

- Byline every piece. Ghost-written and pseudonymous content becomes a liability. AI models weigh author expertise and credibility as a retrieval signal.

- Cite to a source, not a vibe. "According to Similarweb" beats "studies show." AI models verify named sources through retrieval and cite more confidently when they can.

- Log your data provenance. If your post uses original research, publish the methodology. Sample size, collection period, definitions. Regulators and AI models want the same paper trail.
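A provenance log can be as simple as one machine-readable record per study, published alongside the post. The sketch below captures the three fields named above (sample size, collection period, definitions). The `Methodology` class, its field names, and the sample values are hypothetical, chosen only to illustrate the shape of the record.

```python
import json
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Methodology:
    """Hypothetical provenance record for one piece of original research."""
    sample_size: int
    collection_start: date
    collection_end: date
    definitions: dict = field(default_factory=dict)

    def __post_init__(self):
        # Reject records that could not describe a real study.
        if self.sample_size <= 0:
            raise ValueError("sample_size must be positive")
        if self.collection_end < self.collection_start:
            raise ValueError("collection_end precedes collection_start")

    def to_json(self):
        # Dates are serialized as ISO 8601 strings so the record is portable.
        return json.dumps({
            "sample_size": self.sample_size,
            "collection_period": {
                "start": self.collection_start.isoformat(),
                "end": self.collection_end.isoformat(),
            },
            "definitions": self.definitions,
        }, indent=2)


# Hypothetical values for illustration only.
record = Methodology(
    sample_size=1200,
    collection_start=date(2025, 1, 6),
    collection_end=date(2025, 2, 28),
    definitions={"mention rate": "share of sampled AI answers naming the brand"},
)
print(record.to_json())
```

Validating at construction time means an incomplete or impossible methodology fails before publication, not after a reviewer asks for it.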

Brands with well-documented content already rank higher in AI responses than brands with anonymous content. The gap will widen as rules tighten. Our post on building brand guidelines for AI platforms has the operational checklist.

Geography Will Fragment Retrieval

Regulation is not global. The EU leads on transparency. The US leads on consumer protection. China leads on content provenance. Expect a near-term split where the same brand is cited differently across regions because the underlying platform applies a different filter.

The practical response: publish content that passes the strictest filter. If your content clears the attribution bar that EU AI Act transparency requirements push platforms toward, it should clear most other emerging regimes as well. Optimize for the most demanding regulator.

The Enterprise Angle

Enterprises in regulated categories (pharma, finance, insurance, legal) already accept that every public claim needs a source and approval chain. They have not extended that discipline to content written for AI visibility, which marketing owns rather than compliance. That is about to break. The category-specific exposures for finance marketing (misquoted rates, fabricated approvals, hedge language that AI strips out) are mapped in compliance risks for AI in finance marketing.

Run AI-destined content through legal review the same way ad claims are reviewed. Treat it as a structured workflow, not a blocker. Enterprise brands that get this right will see their mention rates rise in regulated categories while competitors get deprioritized. See our piece on AI and intellectual property attribution.

Where to Start

Audit your ten most-trafficked posts against the four retrieval properties (authorship, sourcing, timestamps, structured data). Score each on presence, not quality. Most brands find 60 to 80% of their content fails at least one criterion. Closing the gaps on those four properties is a two-week project with outsized upside.
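The audit itself fits in a spreadsheet, but a short script makes the scoring repeatable. Here is a minimal sketch, assuming each post has already been checked by hand and recorded as four presence flags. The `PostAudit` class, the `failure_rate` helper, and the sample URLs are hypothetical.

```python
from dataclasses import dataclass

# The four retrieval properties, scored on presence only.
PROPERTIES = ("authorship", "sourcing", "timestamps", "structured_data")


@dataclass
class PostAudit:
    """One audited post with a presence flag per retrieval property."""
    url: str
    authorship: bool
    sourcing: bool
    timestamps: bool
    structured_data: bool

    def missing(self):
        # List the properties this post lacks.
        return [p for p in PROPERTIES if not getattr(self, p)]


def failure_rate(audits):
    """Share of posts that fail at least one criterion (0.0 if none audited)."""
    if not audits:
        return 0.0
    failing = sum(1 for audit in audits if audit.missing())
    return failing / len(audits)


# Hypothetical audit results for illustration only.
audits = [
    PostAudit("https://example.com/post-a", True, True, True, True),
    PostAudit("https://example.com/post-b", True, False, True, False),
    PostAudit("https://example.com/post-c", False, True, True, True),
    PostAudit("https://example.com/post-d", True, True, False, True),
]

for audit in audits:
    gaps = ", ".join(audit.missing()) or "none"
    print(f"{audit.url}: missing {gaps}")

print(f"Failing at least one criterion: {failure_rate(audits):.0%}")
```

Listing the missing properties per post turns the audit into a fix list, which is what makes the two-week estimate workable.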

For a worked example in a regulated vertical, read our insurance case study.